Why Deterministic AI Chips Matter — Groq LPU & a Potential Nvidia Deal

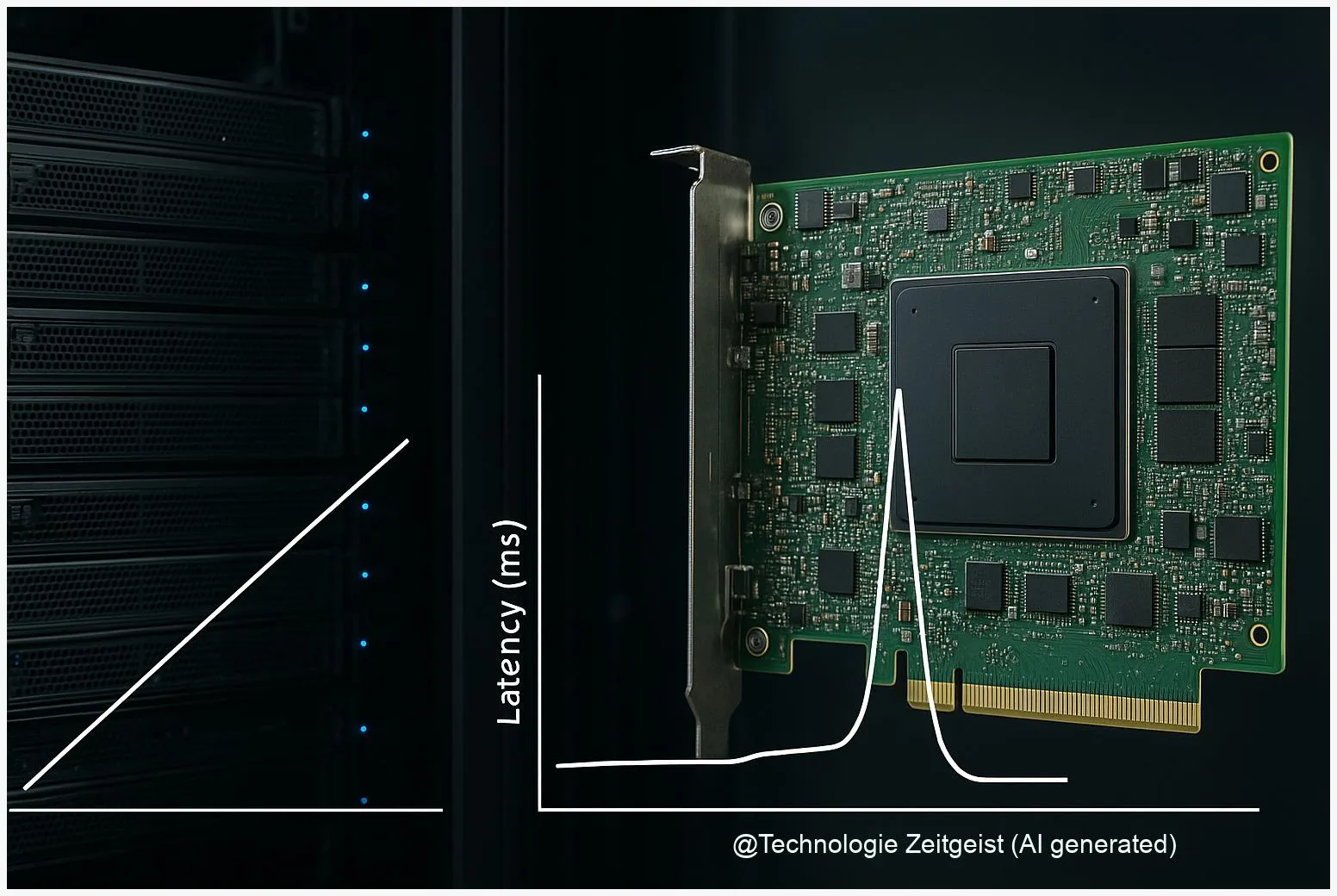

Deterministic inference hardware can make AI responses faster and more predictable for live services. Groq presents its LPU (Language Processing Unit) as a chip built for low-latency, low-variance inference. This post covers what an LPU is, why predictable latency matters, and what a potential Nvidia deal could mean.

What is an LPU?

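A Language Processing Unit is a processor designed for the token-by-token workload of language-model inference, where each output token depends on the tokens generated before it. Groq's stated goal for the design is steady, predictable per-token timing rather than peak throughput alone.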
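To show why inference is a token-by-token workload, here is a minimal sketch of an autoregressive decode loop in Python. The `next_token` callable and the `toy_model` rule are hypothetical stand-ins, not Groq's software or any real model; the point is that each step consumes the previous step's output, so the time per step directly sets how fast a response streams.

```python
import time
from typing import Callable, List, Tuple


def decode(
    next_token: Callable[[List[int]], int],
    prompt: List[int],
    max_new: int,
) -> Tuple[List[int], List[float]]:
    """Greedy autoregressive loop: each new token depends on all prior tokens.

    Returns the full token sequence and the wall-clock time of each step.
    """
    tokens = list(prompt)
    step_times: List[float] = []
    for _ in range(max_new):
        start = time.perf_counter()
        tok = next_token(tokens)  # hypothetical model step; cannot start before the previous one finishes
        step_times.append(time.perf_counter() - start)
        tokens.append(tok)
    return tokens, step_times


def toy_model(tokens: List[int]) -> int:
    # Hypothetical stand-in for a model: next token = (last two tokens summed) mod 10.
    return (tokens[-1] + tokens[-2]) % 10 if len(tokens) >= 2 else 1


tokens, times = decode(toy_model, [1, 1], 5)
print(tokens)
```

With the prompt `[1, 1]`, the loop produces `[1, 1, 2, 3, 5, 8, 3]`. A real model step is far more expensive, but the dependency chain is the same: the response can only stream as fast, and as evenly, as each individual step completes.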

Why determinism matters

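In streaming applications such as chat assistants, users watch text arrive token by token. Two systems with the same average per-token latency can feel very different if one of them occasionally stalls. Reducing variance in per-token latency, including the worst-case tail, makes output flow evenly and makes latency targets easier to meet.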
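A minimal sketch of why average latency alone is misleading, using made-up per-token latencies (illustrative numbers, not measurements of the LPU or any other chip). Both streams have the same mean; only the spread and the tail differ. The percentile uses the simple nearest-rank method.

```python
import math
import statistics


def percentile(values, p):
    """Nearest-rank percentile, with p in (0, 100]."""
    ordered = sorted(values)
    rank = max(1, math.ceil(p * len(ordered) / 100))
    return ordered[rank - 1]


def summarize(latencies_ms):
    """Mean, population standard deviation, and p99 of per-token latencies (ms)."""
    return {
        "mean": statistics.mean(latencies_ms),
        "stdev": statistics.pstdev(latencies_ms),
        "p99": percentile(latencies_ms, 99),
    }


# Illustrative numbers only, not measurements of any real hardware.
steady = [20.0] * 100                # every token takes 20 ms
jittery = [15.0] * 90 + [65.0] * 10  # usually faster, but stalls on 10% of tokens

for name, stream in (("steady", steady), ("jittery", jittery)):
    s = summarize(stream)
    print(f"{name}: mean={s['mean']:.1f} ms, stdev={s['stdev']:.1f} ms, p99={s['p99']:.1f} ms")
```

Both streams average 20 ms per token, but the jittery one has a standard deviation of 15 ms and a p99 of 65 ms, which a user sees as visible pauses in the text. This is why tail percentiles, not just averages, are the useful metric for streaming latency.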

Potential Nvidia involvement

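If a major vendor such as Nvidia were to integrate this technology, it could speed adoption. It would also raise questions about competition in the inference-chip market and about how latency claims are measured and compared across vendors.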
