Choosing the right model for each query can cut delays and costs while keeping quality high. LLM Routing directs each request to the most suitable language model—small and fast for routine tasks, larger and more capable for the hard ones. This article clarifies when routing helps, how common routing methods differ, and practical rules to run a reliable LLM routing setup in production.

Introduction

When a messaging app answers a user or a support bot triages an issue, every request travels to a language model. Many services use a single model for all tasks, but models differ in cost, speed and strengths. Model routing is the practice of sending each request to the model that best balances the task’s need for speed, accuracy and expense. The practical aim is straightforward: avoid overpaying or waiting when a cheaper model would do, and reserve the most capable models for queries that need them.

Think of a music player that picks bitrate depending on the network. The user still gets music, but streaming adapts to context. Model routing applies the same idea to language models: choose a modest model for routine summaries, escalate to a stronger model for complex reasoning or high-stakes answers. Implemented well, routing reduces average latency and cloud costs while preserving user experience.

How LLM Routing works

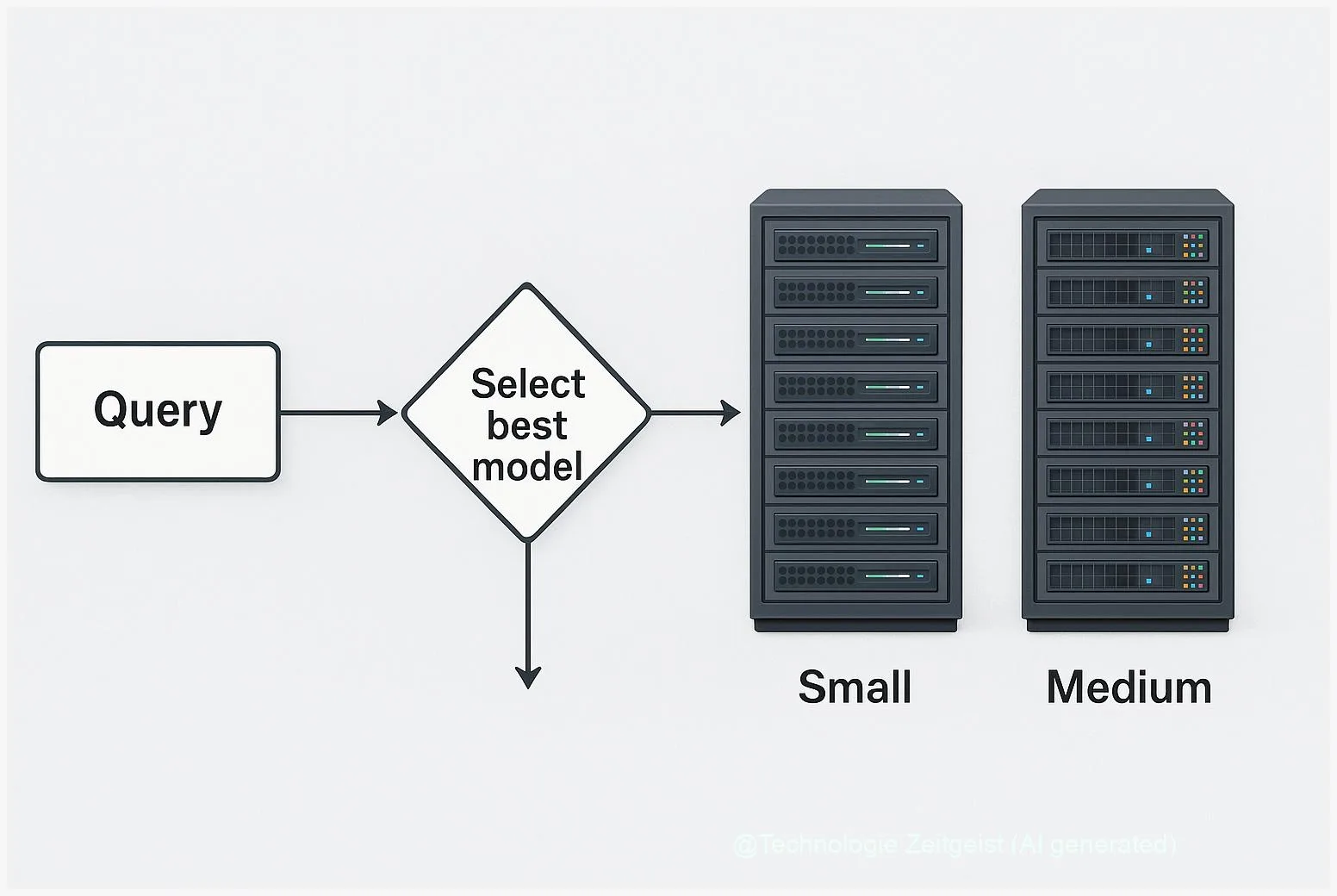

At its core, LLM routing is a decision step between the incoming request and the model call. The router inspects the prompt and chooses which model or processing chain should handle it. Two widespread approaches are used in production: prompt-based classification and semantic (embedding) routing.

Prompt-based classification sends the request (or a short summary) to a lightweight classifier—sometimes itself an LLM—and asks which model to use. Advantages: easy to implement and flexible because the classifier can learn to recognise many intents. Disadvantages: it adds an extra model call, which raises latency and cost unless the classifier is very cheap.

Semantic routing uses an embedding—a numeric fingerprint of the text—and finds the nearest pre-tagged examples in a vector index. If an incoming prompt is close to examples marked “translation” the router sends it to the translation model. This method is deterministic and often faster and cheaper at scale because embeddings can be computed once and similarity search is efficient.

Simple routers decide by label or similarity; robust systems also log the decision, measure accuracy and provide a safe fallback when the router is unsure.

The table below summarises key differences.

| Feature | Description | Typical trade-off |

|---|---|---|

| Prompt-based classifier | Router asks a small model or LLM to label the request. | Flexible labels, higher runtime cost |

| Semantic routing (embeddings) | Uses vector similarity to map requests to tagged examples. | Lower per-request cost, more deterministic |

More advanced routers mix both ideas. For instance, an embedding-based router can hand ambiguous requests to a small classifier. Popular open-source toolkits now offer router patterns: for example, LangChain provides an LLMRouterChain pattern that performs a prompt-driven routing decision and forwards the request to a mapped destination chain. Product teams commonly add a default chain for safety and logging to make routing auditable.

Everyday examples and simple recipes

How does routing look in practice? Here are three concise recipes you can map to real products.

1) Consumer chat app: Most messages ask for information, short edits or casual conversation. Route these to a small fast model. When the user requests code generation, legal wording, or long-form creative work, escalate to a larger model. The router can be a tiny rule engine (keyword + length) or a low-cost classifier.

2) Customer support: Triage incoming tickets. If the text includes a product name and a clear error code, send to an automated KB-answering chain. If the language is ambiguous, route to a human-in-the-loop workflow or a stronger model that can safely ask clarifying questions. Track the fallback rate—high fallback means the router needs better examples or a different threshold.

3) Data pipelines and analytics: For batch summarisation, pre-compute embeddings and group similar documents. Use the same embedding index to choose whether a cheap summariser suffices or a high-accuracy summariser is required for outliers or critical reports.

Implementation tips: keep routing logic visible (version your prompts, store the chosen destination per request, sample routed outputs regularly). Test the router on a realistic set of 500–1,000 real prompts to estimate misrouting rates before wide rollout. If you use an LLM as router, monitor the extra cost per decision and consider caching common routing answers.

Opportunities and risks

Model routing offers clear benefits: lower average latency, reduced cloud spend and finer control over where sensitive data is handled. It also creates new operational responsibilities. Monitoring and governance become essential because a misrouted high-stakes query can create compliance or safety issues.

Some common tensions:

- Cost vs. accuracy: Aggressive routing toward cheaper models saves money but can increase error rates. Measure the business impact of errors, not only the raw error count.

- Latency vs. decision cost: Using a prompt-based router adds an extra model call. If that classifier is slow, the benefit from using a cheaper downstream model is lost. Use embeddings or cached decisions where latency matters.

- Auditability: Where legal or safety requirements exist, you must log which model processed which request and why. Versioned routing rules and stored routing traces help audits and debugging.

Operational hazards include prompt drift (router prompts losing effectiveness as client language changes) and unexpected edge-cases where the router consistently misclassifies a whole class of queries. These can be mitigated by score thresholds, fallback chains and periodic retraining or prompt updates. In some production systems the router also computes a confidence score; low confidence triggers a default to a safer, more capable model.

What comes next and practical choices

Looking forward, routing strategies will become more hybrid and observability-driven. Large-scale research on sparse models—Mixture-of-Experts (MoE) —shows another way to combine many experts inside one model so that only a subset of parameters run per request. Research such as the Switch Transformer papers reported notable training speed advantages for sparse expert models, but those findings are from 2021–2022 and remain primarily relevant for organisations that train very large models or use specialized infrastructure.

For most teams the practical choice remains between building a simple routing layer and using an all-purpose large model. Use routing when at least two of these are true:

- Your traffic profile contains clear, repeatable task categories (e.g., translation, code, short Q&A).

- Saving inference cost matters at scale or latency differs markedly between models.

- You can add logging and a fallback without breaking SLAs.

Simple rollout plan: start with rules and a small classifier, measure the savings and error rates, then compare to an embedding index. Keep a default chain that uses a trusted, capable model. Over time, consider exploring sparse architectures or distillation if you operate your own model training and need more efficient large-model behaviour; note again that MoE research such as Switch Transformer is older than two years and mainly addresses large-scale training trade-offs.

Conclusion

Model routing makes practical sense when tasks vary in difficulty and when cost or latency matter. The choice between a prompt-based LLM router and an embedding-driven router depends on whether you prioritise flexibility or consistent low cost. No router is perfect by default: successful systems combine clear routing logic, observability, safe fallbacks and routine checks against real traffic. With careful metrics and a staged rollout, routing can reduce expenses while keeping answers accurate where it matters most.

Share your experience with routing strategies or ask a question in the comments — practical examples help other teams decide.

Leave a Reply