AI workloads have multiplied traffic inside racks, prompting interest in radio cables for data centers as an alternative to traditional copper or fiber for very short, ultra‑high‑bandwidth links. Terahertz waveguides and directed radio links promise raw capacity where copper hits thermal and signal limits, while keeping latency low for GPU‑to‑GPU fabrics. This article assesses what the technology can realistically deliver today and what operators should test before planning any migration.

Introduction

The growth of large language models and other AI tasks drives far more traffic between GPUs inside a single rack, and between neighboring racks, than typical enterprise workloads do. Copper cables are familiar, cheap and low‑latency, but they begin to struggle when links must carry 100 Gb/s and above, even over short distances, without adding heat to the rack. Operators and researchers therefore look at unconventional approaches: guided radio links at terahertz frequencies, carried in what are sometimes called THz waveguides, or short, highly directional wireless links inside a data center.

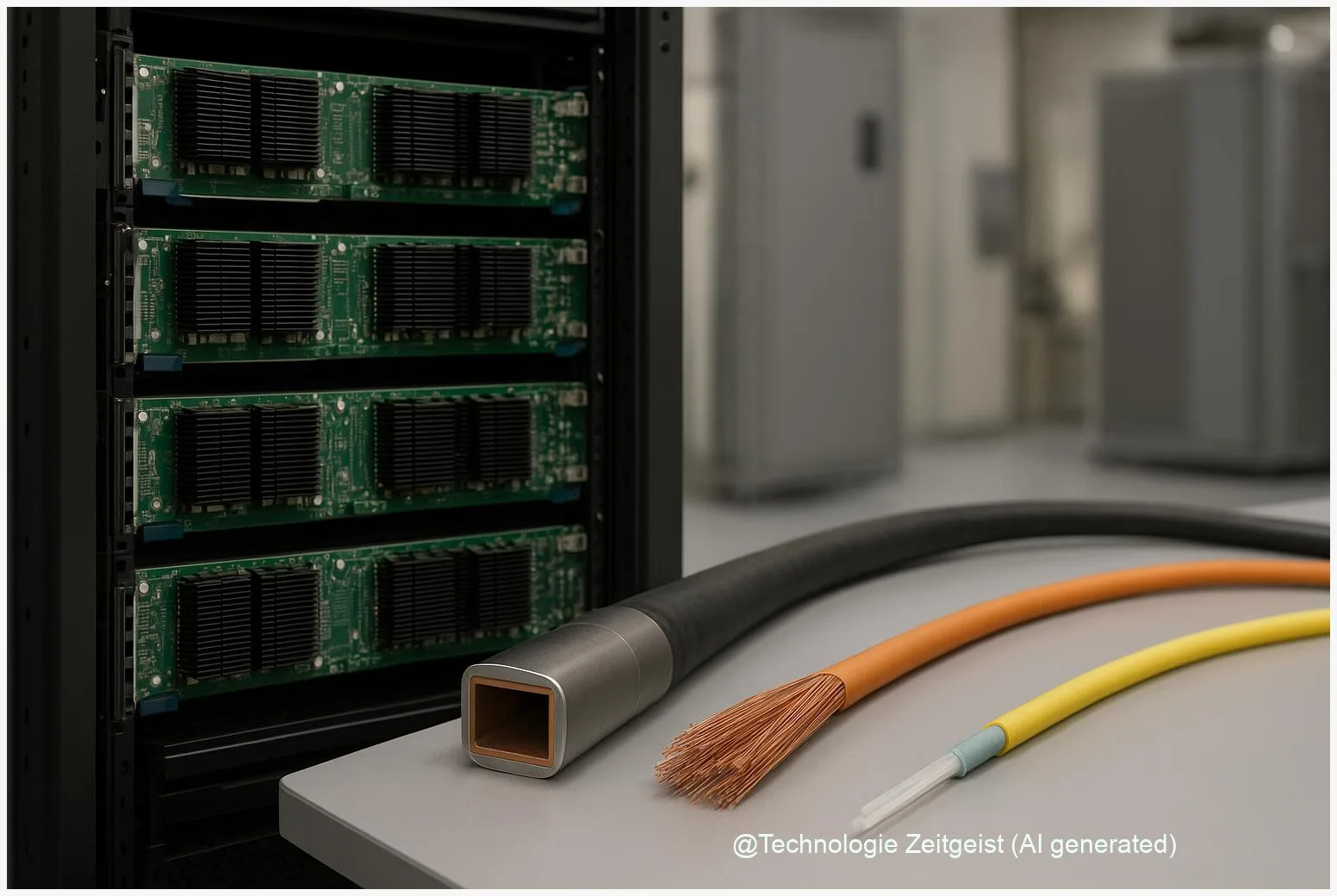

Terahertz here means electromagnetic signals in the band from roughly 100 GHz to 1 THz, which corresponds to free‑space wavelengths of about 3 mm down to 0.3 mm (upper millimeter‑wave through sub‑millimeter). A waveguide is a physical structure that confines and guides these waves, similar to how an optical fiber guides light. Together, the two ideas describe a guided terahertz channel, which is what people mean when they say “radio cables” in the context of data centers: a conduit that carries radio waves instead of electrical currents or light through glass.

How copper and fiber are currently used inside AI racks

Data centers today mix three main physical link types where GPUs and other accelerators connect: short passive copper assemblies (direct attach copper, DAC), active copper with equalizers or retimers, and optical links (active optical cables, AOC, or fiber with transceivers). DACs are pairs of copper conductors bundled and terminated in standardized plugs; they are very common for in‑rack, sub‑meter links because they are inexpensive and add almost no latency.

Optical cables carry light through glass and scale to longer distances and higher aggregate capacity with lower attenuation. But optics involve transceivers that draw power and cost more than passive copper for the shortest links. In practice, operators choose copper inside a server or for very short inter‑GPU wiring and use fiber for rack‑to‑rack aggregation and spine networks.

Copper remains attractive for sub‑meter links, but physical limits show up quickly as data rates and rack density increase.

Three technical constraints explain why copper is being reexamined. First, electrical loss rises rapidly with frequency; at 100 Gb/s and above, the reach of reliable passive copper often falls to a few meters or less. Second, active copper options and retimers consume power and produce heat, which is a problem in racks already drawing tens of kilowatts. Third, parallel copper pairs are susceptible to crosstalk when many links run side by side, which raises bit‑error rates (BER) unless complex equalization is used.

| Feature | Copper (DAC/active) | Fiber (AOC/fiber) | Radio/THz waveguide |
|---|---|---|---|
| Typical range | 0.5–3 m (practical) | 3–100+ m | cm to a few m (prototype range) |
| Latency | Very low | Low | Very low (if guided) |
| Power/heat | Low (passive) to moderate (active) | Moderate (transceivers) | Low conduction losses; coupling hardware may heat |

Why radio cables for data centers are gaining traction

Two pressures make radio solutions appealing. One is sheer demand: AI training workloads create internal traffic patterns that resemble a dense fabric of small, very fast flows between GPUs. That favors physical channels that can deliver high raw bandwidth with the lowest possible latency. The other pressure is copper’s physical ceiling: as serial symbol rates and modulation complexity increase (for example PAM schemes used at high line rates), copper attenuation and crosstalk grow rapidly.

Radio cables, especially terahertz waveguides, can open much wider spectral channels than traditional microwave or millimeter‑wave radio. In an ideal laboratory setup, a guided THz link can support hundreds of gigabits up to multiple terabits per second on a single physical path, drawing on orders of magnitude more usable bandwidth than a single copper pair offers. That capacity density is attractive for short, point‑to‑point interconnects inside a rack or blade enclosure where alignment can be controlled.

There are practical models already in the lab and in early prototypes. Metallic hollow‑waveguides, dielectric rods and on‑chip plasmonic guides are three different approaches researchers test. Each trades off loss, mechanical complexity and manufacturability. Typical realistic ranges reported in research discussions are from a few centimeters up to a few meters depending on design; this is enough to cover the distance between adjacent GPU boards or between closely spaced blades.

Using radio in a guided form — a physical conduit that confines the wave — preserves low latency and reduces interference with neighboring links, compared with free‑space wireless. That makes the idea attractive as a layer between a GPU package and its nearest peers, rather than as a replacement for fiber between racks.

Operational trade‑offs: heat, power and reliability

Every new physical layer brings operational implications. Copper’s main operational advantage is simplicity: passive DACs require little management and behave predictably. But when active equalizers or retimers are needed, additional power draw and heat appear right where cooling is already expensive. Dense GPU racks typically run at roughly 10–30 kW per rack, so adding power‑hungry link electronics can push cooling requirements up.

Optical transceivers also consume power and add inventory complexity, but they are well understood in large deployments: they tolerate distance and electromagnetic interference and scale to high aggregate loads. Radio waveguides change the trade‑off: they do not rely on photonics but they require precise mechanical coupling and often custom transceiver hardware. That custom hardware is where power, thermal design and mean time between failures must be measured before any rollout.
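One way to see why those three measurements matter is to fold them into a rough annual cost per link. The Python sketch below is illustrative only: the function name, the parameters and every number in the example are placeholder assumptions, not vendor data, and the model assumes a constant failure rate (expected failures per year = hours per year / MTBF).

```python
HOURS_PER_YEAR = 8760


def annual_link_cost(unit_cost, service_life_years, power_w, mtbf_hours,
                     energy_cost_per_kwh, replacement_cost,
                     cooling_overhead=0.3):
    """Rough yearly cost of one link: amortized hardware + energy + expected repairs.

    cooling_overhead is the assumed extra energy spent removing the link's heat,
    expressed as a fraction of the link's own power draw.
    """
    if service_life_years <= 0 or mtbf_hours <= 0:
        raise ValueError("service_life_years and mtbf_hours must be positive")

    amortized_hardware = unit_cost / service_life_years
    energy_kwh = power_w * (1 + cooling_overhead) * HOURS_PER_YEAR / 1000
    energy = energy_kwh * energy_cost_per_kwh
    # Constant-failure-rate assumption: expected failures per year = hours / MTBF.
    expected_failures = HOURS_PER_YEAR / mtbf_hours
    return amortized_hardware + energy + expected_failures * replacement_cost


# Placeholder inputs for two hypothetical link types; replace with measured values.
candidates = {
    "active copper (hypothetical)": dict(
        unit_cost=150, service_life_years=5, power_w=3.0, mtbf_hours=500_000,
        energy_cost_per_kwh=0.10, replacement_cost=200),
    "guided THz module (hypothetical)": dict(
        unit_cost=600, service_life_years=5, power_w=2.0, mtbf_hours=200_000,
        energy_cost_per_kwh=0.10, replacement_cost=700),
}

for name, params in candidates.items():
    print(f"{name}: {annual_link_cost(**params):.2f} per year")
```

The point of the sketch is the structure, not the totals: until power draw and MTBF are measured on real hardware, the energy and repair terms are guesses, and they can easily outweigh the purchase price.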

Reliability questions include susceptibility to misalignment, sensitivity to temperature variations, and the durability of connectors in a maintenance cycle. A concentrated bundle of radio waveguides or guided antenna assemblies could be more sensitive to mechanical stress than a fiber patch, so rack design and cable management change. On the other hand, guided radio avoids some optical fragilities such as dust on fiber endfaces and can be engineered for replaceable modules.

For operators, the sensible path is staged testing: perform in‑situ BER and latency measurements under full GPU load, monitor the small‑signal and thermal behavior, and compare measured TCO over a multi‑year horizon. Many recent vendor and standards‑forum discussions also recommend hybrid fabrics — keep copper for the very shortest intra‑node links, use radio waveguides where they clearly beat copper on bandwidth density, and keep fiber for aggregation and spine layers.
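When planning the BER part of such a test, it helps to know how long a link must run before a result means anything. The sketch below uses the standard zero‑error confidence bound: if N bits pass with no errors and errors are independent, the claim "BER is below p" holds at confidence CL once N ≥ −ln(1 − CL) / p. The function names, the 1e‑12 target and the 100 Gb/s line rate are illustrative assumptions.

```python
import math


def bits_for_ber_confidence(target_ber, confidence=0.95):
    """Error-free bits needed to claim BER < target_ber at the given confidence.

    Assumes independent bit errors and zero observed errors.
    """
    if not 0 < target_ber < 1:
        raise ValueError("target_ber must be between 0 and 1")
    if not 0 < confidence < 1:
        raise ValueError("confidence must be between 0 and 1")
    return -math.log(1.0 - confidence) / target_ber


def error_free_duration_s(target_ber, line_rate_bps, confidence=0.95):
    """Seconds of error-free traffic needed at a given line rate."""
    if line_rate_bps <= 0:
        raise ValueError("line_rate_bps must be positive")
    return bits_for_ber_confidence(target_ber, confidence) / line_rate_bps


# Illustrative case: 100 Gb/s link, BER target of 1e-12, 95 % confidence.
seconds = error_free_duration_s(1e-12, 100e9)
print(f"{seconds:.1f} s of error-free traffic needed")
```

For these example values the bound works out to about 30 seconds per link, so the measurement itself is cheap. The harder part is holding full GPU load and worst‑case thermal conditions while it runs, and repeating it across every link in the bundle.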

What to expect next and how to prepare

Expect incremental adoption rather than an abrupt switch. In the coming two to three years the most likely path is pilot projects inside hyperscaler labs and specialized hardware vendors producing modular THz guide prototypes. Standards work will follow; without interoperable connectors and measurement norms, broad deployment will be slow. Participation in standards groups and early lab testing are therefore strategic steps for operators who depend on predictable upgrades.

From a procurement and operations viewpoint, prepare by updating test suites and RFPs to include BER under bundled‑cable conditions, thermal imaging during worst‑case workloads, and replacement cycles for any active coupling modules. Include acceptance criteria for latency jitter and packet loss under full fabric load. If your team runs GPU clusters, run an A/B test: leave a control set with proven copper/fiber links and compare a small cluster using guided radio cables over several months.
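Acceptance criteria for such an A/B test are easier to enforce when they are written down as a check. The following is a minimal sketch, assuming latency samples in microseconds have already been collected from both groups. The thresholds, function names and the sample values are hypothetical placeholders, and jitter is approximated here as the standard deviation of latency.

```python
import statistics


def summarize_latency(samples_us):
    """Return median, 99th percentile and jitter (std. deviation) of latency samples."""
    if len(samples_us) < 2:
        raise ValueError("need at least two samples")
    ordered = sorted(samples_us)
    # quantiles(n=100) returns 99 cut points; index 98 is the 99th percentile.
    cuts = statistics.quantiles(ordered, n=100, method="inclusive")
    return {
        "p50_us": statistics.median(ordered),
        "p99_us": cuts[98],
        "jitter_us": statistics.pstdev(ordered),
    }


def passes_acceptance(control, candidate,
                      max_p99_ratio=1.10, max_jitter_ratio=1.25):
    """True if the candidate link is within the allowed margins of the control."""
    return (candidate["p99_us"] <= control["p99_us"] * max_p99_ratio
            and candidate["jitter_us"] <= control["jitter_us"] * max_jitter_ratio)


# Made-up placeholder samples, only to show the call pattern.
control_samples = [2.0, 2.1, 2.0, 2.2, 2.1, 2.0, 2.3, 2.1]
candidate_samples = [2.0, 2.1, 2.0, 2.2, 2.1, 2.0, 9.0, 2.1]

control = summarize_latency(control_samples)
candidate = summarize_latency(candidate_samples)
print("candidate accepted:", passes_acceptance(control, candidate))
```

Comparing tail latency and jitter against the control group, rather than against a fixed number, keeps the test meaningful when the workload or firmware changes during a pilot that runs for months.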

For software and networking architecture, design fabrics that tolerate mixed link properties. That means route planners and schedulers should be able to prefer ultra‑low‑latency paths for tightly coupled training jobs while using higher‑capacity aggregation links for bulk transfers. In other words, plan for heterogeneous interconnects: copper where it is cheapest and simplest, radio where it increases per‑port capacity without unacceptable operational cost, and fiber for distance and aggregation.
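A scheduler that is aware of mixed link types can be sketched in a few lines. The example below is a simplified illustration, not a real fabric manager: the `Link` type, the two traffic classes and all latency and capacity figures are hypothetical placeholders chosen only to show the selection logic.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Link:
    name: str
    medium: str           # "copper", "thz_waveguide" or "fiber"
    latency_ns: float     # one-way latency (placeholder values below)
    capacity_gbps: float  # usable capacity (placeholder values below)


def pick_link(links, traffic_class):
    """Choose a link for a flow based on its traffic class."""
    if not links:
        raise ValueError("no links available")
    if traffic_class == "latency_sensitive":
        # Lowest latency wins; ties go to the higher-capacity link.
        return min(links, key=lambda l: (l.latency_ns, -l.capacity_gbps))
    if traffic_class == "bulk":
        # Highest capacity wins; ties go to the lower-latency link.
        return max(links, key=lambda l: (l.capacity_gbps, -l.latency_ns))
    raise ValueError(f"unknown traffic class: {traffic_class!r}")


# Hypothetical links between two nodes; the numbers are not measurements.
available = [
    Link("dac0", "copper", latency_ns=5.0, capacity_gbps=100.0),
    Link("thz0", "thz_waveguide", latency_ns=6.0, capacity_gbps=400.0),
    Link("opt0", "fiber", latency_ns=50.0, capacity_gbps=800.0),
]

print(pick_link(available, "latency_sensitive").name)
print(pick_link(available, "bulk").name)
```

With these placeholder values the latency‑sensitive flow lands on the copper link and the bulk transfer on the fiber link. A production scheduler would also need to weigh current load, error rates and link health, which this sketch deliberately leaves out.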

Conclusion

AI workloads are changing the balance between latency, bandwidth and operational cost inside data centers. Copper remains useful for the shortest, simplest connections, but its physical and thermal limits are visible as GPU racks become denser and speeds climb. Radio cables based on terahertz waveguides offer an intermediate option: very high raw capacity over the short distances that separate GPUs and blades, with low latency and a smaller footprint than some optical solutions. Practical deployment will depend on manufacturable connectors, proven in‑field reliability and a clear TCO advantage once power and maintenance are counted.

Operators should not rush to replace existing infrastructure, but they should begin disciplined pilots, update test protocols to include thermal and bundled‑cable conditions, and engage with standards bodies. Those steps turn the concept from a promising lab result into an operational option for AI scale‑up networks.

