Light‑field photography lets you change the focus and perspective after you press the shutter. A Light‑Field Camera records not only where light lands on the sensor but also the directions the rays travel, which makes digital refocusing and depth extraction possible from a single exposure. This approach moves creative control to post‑capture, at the cost of a trade‑off between spatial detail and directional information.

Introduction

When a photograph shows only a narrow band in focus, that is a result of how lenses map three‑dimensional scenes onto a flat sensor. Each sensor point sums the light arriving through the whole aperture and discards the direction of the individual rays, so only objects near the focus distance look sharp; stopping down the aperture or moving the focus changes how much, and which part, of the scene looks sharp. Light‑field photography addresses that trade‑off by recording extra information about ray directions at the moment of capture.

This is not a magic wand: the camera collects different data and reconstruction requires computation, so results depend on optical design, sensor resolution and software. Still, for anyone who has wished to refocus a picture after taking it or to extract a depth map for augmented reality, the technique opens new creative and practical doors without repeating the shoot.

How a Light‑Field Camera captures more than a photo

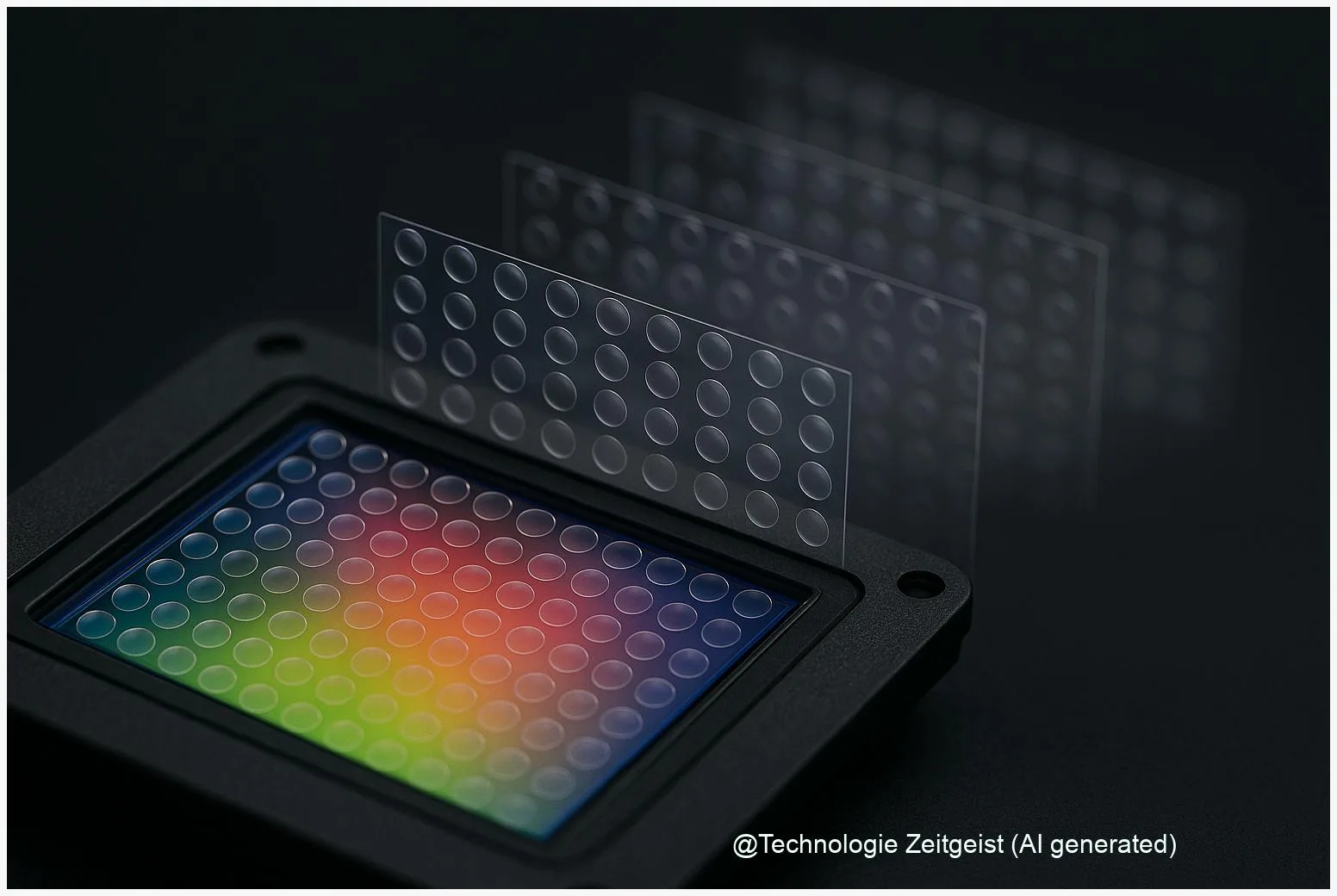

At its core, a light field is a four‑dimensional description of light in free space: two coordinates for position and two for direction. A conventional photograph records a two‑dimensional projection of that higher‑dimensional function. A Light‑Field Camera samples both position and direction at once, usually by placing a micro‑lens array in front of the image sensor. Each small lenslet produces a tiny image of the main lens aperture; the pixels under that lenslet record how light arrived from different directions at that lenslet's position on the image plane.
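To make the four‑dimensional picture concrete, here is a minimal sketch of how a raw lenslet image could be rearranged into a 4D array. It assumes an idealized, perfectly aligned square microlens grid in which every lenslet covers exactly `n_u × n_v` pixels; real cameras need calibration, and the function names `decode_lenslet_image` and `sub_aperture_view` are illustrative, not taken from any particular library.

```python
import numpy as np

def decode_lenslet_image(raw, n_u, n_v):
    """Rearrange an idealized lenslet image into a light field L[u, v, s, t].

    Assumption (hypothetical setup): a perfectly aligned square microlens
    grid where each lenslet covers exactly n_u x n_v sensor pixels.
    (u, v) index direction, (s, t) index the lenslet position.
    """
    height, width = raw.shape
    if height % n_u or width % n_v:
        raise ValueError("sensor size must be a multiple of the lenslet size")
    n_s, n_t = height // n_u, width // n_v
    # Split rows into (lenslet row s, pixel-under-lenslet u), same for columns.
    blocks = raw.reshape(n_s, n_u, n_t, n_v)
    # Reorder axes to (u, v, s, t): direction first, then position.
    return blocks.transpose(1, 3, 0, 2)

def sub_aperture_view(light_field, u, v):
    """Return the 2D image seen through one direction sample (u, v)."""
    return light_field[u, v]

if __name__ == "__main__":
    raw = np.arange(36).reshape(6, 6)          # toy 6x6 "sensor"
    lf = decode_lenslet_image(raw, 3, 3)       # 3x3 directions, 2x2 lenslets
    print(lf.shape)
    print(sub_aperture_view(lf, 0, 0))
```

Taking the same pixel from under every lenslet gives one "sub‑aperture" view, which is the scene as seen through one small part of the main lens aperture.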

That extra directional information is what enables operations that are impossible with a single ordinary photo. Digital refocusing amounts to re‑integrating the recorded rays with a shifted virtual film plane. In the frequency domain this is known as Fourier‑slice photography: the refocus step becomes an extraction of a tilted slice through the 4D spectrum, which can be computed efficiently after suitable preprocessing.
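The re‑integration idea can be sketched in the spatial domain as "shift and add": shift each directional view in proportion to its offset from the central view, then average. This is not the Fourier‑slice method, only a simpler equivalent in spirit. The array layout `light_field[u, v, s, t]`, the `slope` parameter and its sign convention, and the use of integer shifts with wrap‑around edges are all simplifying assumptions for illustration.

```python
import numpy as np

def refocus_shift_and_add(light_field, slope):
    """Synthesize a refocused image from light_field[u, v, s, t].

    slope is the pixel shift applied per unit of angular offset from the
    central view (an assumed convention). Shifts are rounded to whole
    pixels and np.roll wraps around at the image edges, so this is only
    a rough sketch of the principle.
    """
    n_u, n_v, n_s, n_t = light_field.shape
    c_u, c_v = (n_u - 1) / 2.0, (n_v - 1) / 2.0
    out = np.zeros((n_s, n_t), dtype=np.float64)
    for u in range(n_u):
        for v in range(n_v):
            ds = int(round(slope * (u - c_u)))
            dt = int(round(slope * (v - c_v)))
            out += np.roll(light_field[u, v], shift=(ds, dt), axis=(0, 1))
    return out / (n_u * n_v)

if __name__ == "__main__":
    # Toy scene: one bright point whose position moves by 2 pixels per view.
    lf = np.zeros((3, 3, 16, 16))
    for u in range(3):
        for v in range(3):
            lf[u, v, 8 + 2 * (u - 1), 8 + 2 * (v - 1)] = 1.0
    print(refocus_shift_and_add(lf, -2.0).max())  # point brought into focus
    print(refocus_shift_and_add(lf, 0.0).max())   # point left blurred
```

Choosing the slope that cancels a point's view‑to‑view displacement brings that point into focus; any other slope spreads it over several pixels, which is the synthetic blur.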

A light field records both where light lands and which directions it came from — that is why the image can be refocused later.

Design choices matter. The classical plenoptic design maximizes angular samples under each microlens, which improves refocus sharpness but reduces native spatial resolution. The focused plenoptic (sometimes called plenoptic 2.0) shifts the microlens plane relative to the sensor so the trade‑off can be tuned: more spatial fidelity for selected depth ranges, or more angular information where refocusing flexibility is needed.

If numbers help: early academic prototypes sampled directions with roughly a dozen to a few dozen pixels per microlens, and camera makers later marketed a “megaray” figure to describe how many ray samples their cameras could capture. Those figures are a way to compare directional sampling, but they are not equivalent to conventional megapixels as a measure of final image detail.

If a short table clarifies the options, here are typical characteristics:

| Design | Strength | Drawback |
|---|---|---|
| Standard plenoptic | High angular detail → flexible refocus | Lower spatial resolution in final image |
| Focused plenoptic | Better spatial detail at chosen depths | Less angular range for large depth shifts |
| Conventional camera | Max spatial resolution and low noise | No per‑pixel directional information |

What you can do with a light field

Two immediate capabilities stand out: post‑capture refocusing and depth extraction. With the recorded angular samples you can synthesize images that appear as if the camera had been focused at different distances or had used a different aperture. That makes it possible to create a deep focus (more of the scene sharp) or to shift the focus point after the shoot. Modern software also computes a depth map from the directional differences, which can be used for selective editing, realistic relighting, or simple 3D measurements.
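One simple way to turn "directional differences" into depth is a photo‑consistency search: for each candidate shift per view, align all views and pick, per pixel, the shift at which they agree best. The sketch below is a hedged illustration of that idea, not how any particular product computes depth; the `light_field[u, v, s, t]` layout, the `candidate_slopes` parameter, integer shifts and wrap‑around edges are assumptions.

```python
import numpy as np

def disparity_map(light_field, candidate_slopes):
    """Per-pixel disparity estimate from light_field[u, v, s, t].

    For every candidate slope, each view is shifted in proportion to its
    angular offset from the center and the per-pixel variance across
    views is used as a cost. The slope with the lowest variance wins.
    Integer shifts and np.roll wrap-around keep the sketch short.
    """
    n_u, n_v, _, _ = light_field.shape
    c_u, c_v = (n_u - 1) / 2.0, (n_v - 1) / 2.0
    costs = []
    for slope in candidate_slopes:
        aligned = []
        for u in range(n_u):
            for v in range(n_v):
                ds = int(round(slope * (u - c_u)))
                dt = int(round(slope * (v - c_v)))
                aligned.append(np.roll(light_field[u, v], (ds, dt), axis=(0, 1)))
        costs.append(np.var(np.stack(aligned), axis=0))
    best = np.argmin(np.stack(costs), axis=0)
    return np.asarray(candidate_slopes)[best]

if __name__ == "__main__":
    rng = np.random.default_rng(0)
    texture = rng.random((32, 32))
    # Toy scene: a textured plane that moves 1 pixel per view step.
    lf = np.stack([
        np.stack([np.roll(texture, (u - 1, v - 1), axis=(0, 1)) for v in range(3)])
        for u in range(3)
    ])
    print(np.unique(disparity_map(lf, [-2, -1, 0, 1, 2])))
```

Disparity maps to depth only after calibration, and the variance cost is uninformative in textureless regions, where every candidate looks equally consistent.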

There have been consumer attempts to bring these ideas to the market. Early products based on the academic work offered interactive refocus viewers and creative effects; professional solutions applied light fields in film and visual effects where the ability to re‑frame and re‑focus footage can save costly reshoots. In microscopy and industrial imaging the extended depth‑of‑field without closing the aperture is a genuine advantage: you avoid lengthening exposure times or stacking many images.

Using a light field often flows like this: shoot with the light‑field camera or rig; run a calibration step so the software maps microlenses to sensor pixels; then render refocused images or depth maps on the desktop. The interface usually looks like a focus slider and an aperture control rather than a complex manual pipeline, because the heavy lifting happens in the reconstruction step.

Practical examples are plentiful: filmmakers use light fields to capture plates for compositing, researchers use them to measure micro‑structures without moving the sample, and hobbyists experiment with DIY microlens sheets on mirrorless cameras to explore the effect on focus and perspective.

Opportunities and practical tensions

The opportunities are concrete: a single exposure can produce multiple useful outputs (refocused images, depth maps, viewpoint‑shifts) that simplify workflows in film, research and AR. Depth information from light fields tends to be dense and locally accurate if the sampling is sufficient, which is why the technique attracts use in cultural heritage imaging, microscopy and computational photography research.

However, the trade‑offs limit general adoption. The fundamental tension is spatial versus angular sampling: the sensor area is finite, so allocating more pixels to direction reduces pixels available for fine spatial detail. That matters especially for prints or high‑resolution displays. Optical diffraction also sets a hard limit once microlenses and pixels become very small.
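The spatial‑versus‑angular budget is easy to put into numbers. The sketch below assumes a standard plenoptic layout in which the rendered image has one pixel per lenslet, and uses the Airy disk diameter 2.44·λ·N as a rough yardstick for the diffraction limit. The sensor size, lenslet size, wavelength and f‑number in the example are hypothetical values chosen for illustration, not the specifications of any real camera.

```python
def plenoptic_budget(sensor_w_px, sensor_h_px, px_per_lenslet_side):
    """Split a sensor's pixel count into angular and spatial samples.

    Assumes a square block of px_per_lenslet_side^2 pixels under each
    lenslet and one rendered pixel per lenslet (standard plenoptic design).
    """
    lenslets_w = sensor_w_px // px_per_lenslet_side
    lenslets_h = sensor_h_px // px_per_lenslet_side
    return {
        "ray_samples": sensor_w_px * sensor_h_px,
        "angular_samples": px_per_lenslet_side ** 2,
        "spatial_samples": lenslets_w * lenslets_h,
    }

def airy_disk_diameter_um(wavelength_um, f_number):
    """Diameter of the Airy disk (first dark ring) in micrometres."""
    return 2.44 * wavelength_um * f_number

if __name__ == "__main__":
    # Hypothetical 4000 x 3000 sensor with 10 x 10 pixels per lenslet.
    print(plenoptic_budget(4000, 3000, 10))
    # Green light (0.55 um) at f/4, also hypothetical.
    print(airy_disk_diameter_um(0.55, 4.0))
```

With these example values, 12 million ray samples yield only 120,000 spatial samples (400 × 300) at 100 directions each, and the diffraction spot is about 5.4 µm across, so shrinking pixels much below that size adds little real detail.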

File sizes and computation are practical hurdles as well. A single light‑field capture contains many more samples than a conventional photo; reconstruction and denoising require more processing time. For mobile or consumer products this means either heavy on‑device computation or cloud processing, both of which add cost and complexity.

There are procedural tensions too: producing reliable depth maps requires careful calibration, and automated pipelines can fail in low‑texture regions or on reflective surfaces. For filmmakers and scientists the need for predictable, reproducible results often means additional test shots or more elaborate rigs, not simply a one‑button replacement for existing cameras.

Where the technology is heading

Progress is a mixture of optical engineering and smarter computation. Two routes are active: improving sensor and microlens design to better balance spatial and angular sampling, and using machine learning to reconstruct high‑detail images from fewer raw samples. Neural reconstruction models can infer lost spatial detail and reduce visible artifacts, making lighter hardware designs viable.

Hybrid approaches are promising: dual‑pixel sensors, multi‑camera arrays and burst‑capture methods provide directional cues without a full microlens capture. Combined with AI‑based super‑resolution, these hybrid systems can produce results that approach the flexibility of classic light fields while keeping image detail and noise performance acceptable for everyday use.

For professionals, light‑field capture will likely remain valuable where depth and post‑capture control reduce cost or risk — for example in VFX plates, scientific imaging, and certain industrial inspections. For consumers, expect more selective uses of the concept: smartphone features that synthesize light‑field‑like refocus from multiple frames, and apps that use depth maps to create convincing portrait or AR effects.

If you want to explore the topic practically, look for academic datasets and viewers that let you refocus samples on a computer, or experiment with low‑cost microlens sheets on interchangeable‑lens cameras to see the effect firsthand. The core idea — capturing direction as well as position — remains the most useful mental model when judging new cameras and features in the years ahead.

Conclusion

Light‑field photography changes what a single photograph can represent. By recording directions as well as positions of light rays, a Light‑Field Camera allows refocusing, depth extraction and small perspective shifts after capture. That flexibility brings new creative options and practical benefits in scientific and film work, but it also forces compromises in spatial resolution, processing and calibration.

For readers, the takeaway is simple: the technology trades sensor area for directional information. Where you need post‑capture control and dense depth, light fields can save time and yield outputs that ordinary cameras cannot. Where ultimate spatial detail or low noise is paramount, conventional designs remain preferable. Over the next few years, better sensors, smarter algorithms and hybrid capture methods will make light‑field features more common in professional workflows and selected consumer tools.

