Introduction

Modern data centers run thousands of servers that generate large amounts of heat. When a server does heavy computation—training a model or serving many users—most of the electrical energy ends up as heat. For operators that means two linked problems: keeping equipment within temperature and humidity limits, and doing so without consuming disproportionate amounts of electricity. The choices made for cooling affect building design, operating costs and how easily a site can feed its waste heat back into the local energy system.

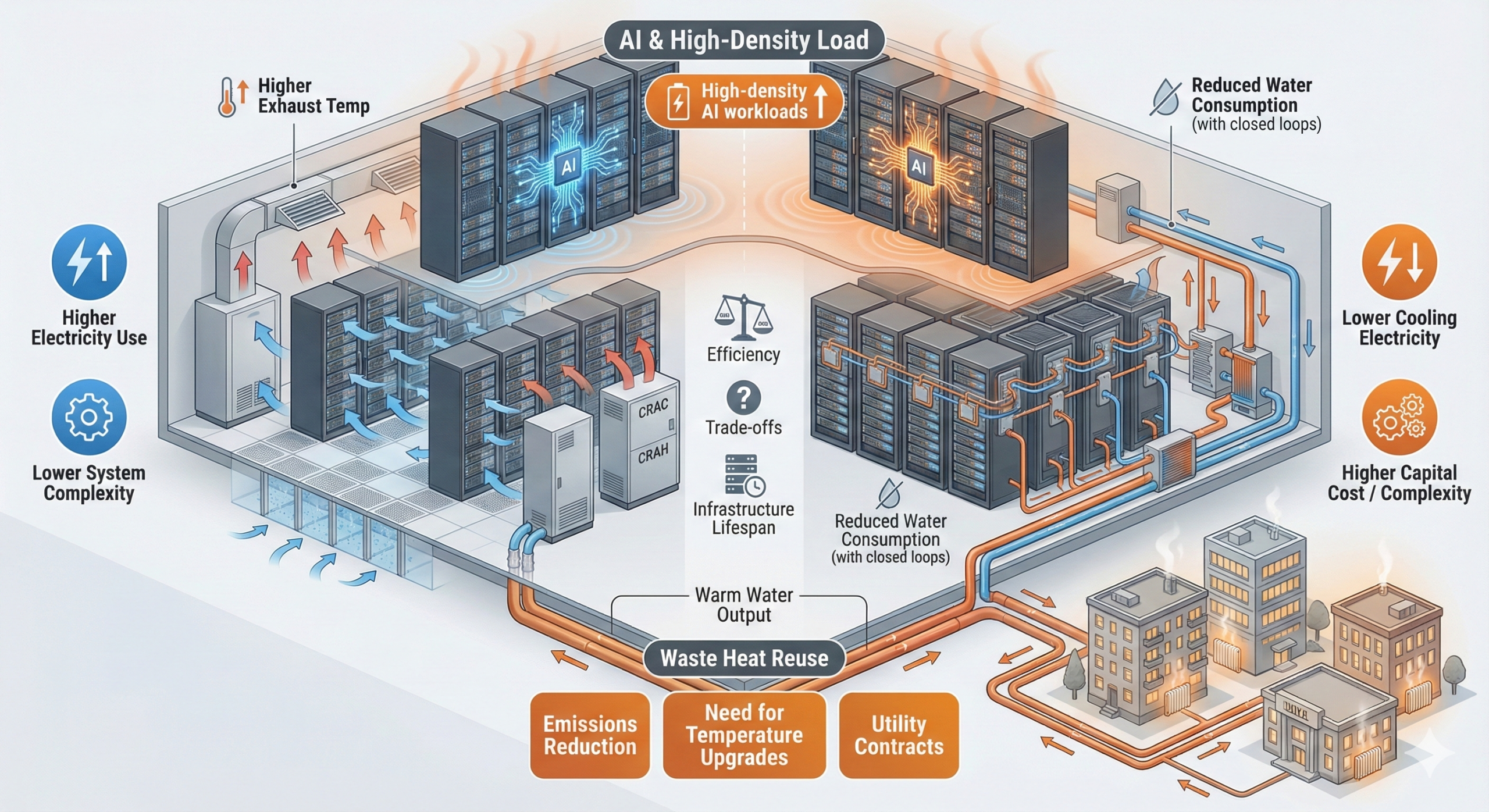

On the surface the options look simple: fans and air conditioners, or cooling with liquids. But each path contains trade-offs. Air cooling is familiar and flexible; liquid cooling can reduce electricity use and enable far higher rack densities. Reusing waste heat for district heating can cut emissions locally, yet requires technical measures—like heat pumps—and long-term contracts to make economic sense. The sections that follow unpack these topics with clear examples and practical comparisons.

How data center cooling works

Cooling a data center means removing the heat that servers produce and moving it away from sensitive components. Traditionally that happens with air: cool air is pushed through racks and absorbs heat, and that heat is then carried to chillers or cooling towers, where it is rejected. This approach is simple and well understood, but it becomes inefficient as rack power rises, because moving more air requires larger fans and more fan electricity. The overall efficiency of a facility is often expressed as PUE (Power Usage Effectiveness), the ratio of total facility energy to the energy used by IT equipment. A PUE closer to 1.0 means less extra energy spent on cooling and power infrastructure.
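As a quick illustration of the PUE definition, the following Python sketch computes PUE and the share of facility energy that goes to overhead. The function names and the meter readings are hypothetical and chosen only to show the arithmetic.

```python
def pue(total_facility_kwh: float, it_equipment_kwh: float) -> float:
    """Power Usage Effectiveness: total facility energy divided by IT energy."""
    if it_equipment_kwh <= 0:
        raise ValueError("IT energy must be positive")
    if total_facility_kwh < it_equipment_kwh:
        raise ValueError("total facility energy cannot be below IT energy")
    return total_facility_kwh / it_equipment_kwh


def overhead_share(pue_value: float) -> float:
    """Fraction of total facility energy not consumed by IT equipment."""
    return 1.0 - 1.0 / pue_value


# Hypothetical monthly meter readings (illustrative values, not measurements).
air_cooled = pue(total_facility_kwh=1_500_000, it_equipment_kwh=1_000_000)
liquid_cooled = pue(total_facility_kwh=1_200_000, it_equipment_kwh=1_000_000)

print(f"air-cooled hall:    PUE {air_cooled:.2f}, overhead {overhead_share(air_cooled):.0%}")
print(f"liquid-cooled hall: PUE {liquid_cooled:.2f}, overhead {overhead_share(liquid_cooled):.0%}")
```

Note that PUE covers all non-IT energy, including power distribution and lighting, so it is only a rough proxy for cooling efficiency on its own.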

Liquid cooling replaces or supplements air with a liquid, often water-based or a dielectric fluid, that either contacts heat-producing components directly or flows through cold plates mounted on CPUs and GPUs. Liquids carry far more heat per unit volume than air, so pump energy can be lower than fan energy for the same heat removal. The common approaches, with air cooling as the baseline, are:

- Air cooling with raised-floor or contained hot/cold aisles.

- Direct‑to‑chip (D2C) cooling: coolant flows through cold plates attached to processors and other hotspots.

- Immersion cooling: entire server boards or components are submerged in a dielectric fluid (single‑phase or two‑phase).
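To make the difference in heat transport concrete, here is a minimal sketch based on the standard relation Q = ρ · V̇ · c_p · ΔT. The 30 kW rack load and the 10 K coolant temperature rise are assumptions picked for illustration, and the fluid properties are approximate room-temperature values.

```python
def volumetric_flow_m3_per_s(heat_w: float, density_kg_m3: float,
                             cp_j_per_kg_k: float, delta_t_k: float) -> float:
    """Flow needed to carry heat_w, from Q = rho * V_dot * c_p * delta_T."""
    return heat_w / (density_kg_m3 * cp_j_per_kg_k * delta_t_k)


# Approximate fluid properties near room temperature.
AIR = {"density": 1.2, "cp": 1005.0}      # kg/m^3, J/(kg*K)
WATER = {"density": 998.0, "cp": 4182.0}  # kg/m^3, J/(kg*K)

RACK_HEAT_W = 30_000.0  # assumed rack load
DELTA_T_K = 10.0        # assumed coolant temperature rise

air_flow = volumetric_flow_m3_per_s(RACK_HEAT_W, AIR["density"], AIR["cp"], DELTA_T_K)
water_flow = volumetric_flow_m3_per_s(RACK_HEAT_W, WATER["density"], WATER["cp"], DELTA_T_K)

print(f"air:   {air_flow:.2f} m^3/s")
print(f"water: {water_flow * 1000:.2f} L/s")
print(f"air needs roughly {air_flow / water_flow:.0f}x the volume flow of water")
```

The sketch compares volume flow only. Actual fan and pump power also depend on pressure drop and equipment efficiency, which are not modeled here.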

Efficiency gains from liquid cooling are case-dependent; industry reviews from 2023–2024 report typical reductions in cooling energy between roughly 20 % and 50 % compared with air cooling, depending on rack density and implementation.

To compare approaches, the following short table highlights typical differences at a glance.

| Characteristic | Air cooling | Direct‑to‑chip | Immersion |
|---|---|---|---|
| Cooling medium | Air | Water or dielectric fluid | Dielectric liquid |
| Typical energy for cooling | Higher at high densities | Lower than air for dense racks | Lowest; supports highest densities |
| Retrofit complexity | Low | Medium (depends on servers) | High (requires new hardware/ops) |

Key practical points: liquid cooling lowers the electricity used for fans and chillers, but it adds pumps, heat exchangers and in many cases a need for specialized maintenance practices. Reliability and serviceability are central concerns: operators want to avoid leaks, ensure component replaceability and maintain safety certifications.

How liquid cooling is used in practice

Adoption of liquid cooling follows two main patterns. Hyperscalers and cloud providers often use direct liquid cooling where workloads and rack densities justify the investment. Enterprises and colocation providers may pilot rear‑door heat exchangers or hybrid systems first, because a full immersion rollout means replacing servers or redesigning racks.

A simple practical example: a service provider runs a cluster of GPU racks for machine learning. Each rack draws 30–40 kW. With air cooling, fan power and chiller load increase significantly compared with an older, low-density rack. Installing cold plates on GPUs (D2C) lets the operator remove heat at source and route it to a facility-level heat exchanger. Measured outcomes often include lower fan energy, quieter operation and a higher usable power per rack.

But the operator also faces challenges. Retrofitting means downtime, mechanical work and changes to maintenance procedures. Immersion cooling frequently needs different server designs; while it offers the highest energy savings and removes the need for airflow through the servers entirely, it requires new supply chains for replacement parts and trained technicians. Many providers therefore take a staged approach: run pilots (1–3 racks) to collect PUE, cooling kWh per kWh of IT load and failure-rate metrics, then decide whether to scale.
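A pilot evaluation along these lines could be sketched as follows. The `PilotResult` class, the `worth_scaling` thresholds and all numbers are hypothetical; real acceptance criteria would come from the operator's own business case.

```python
from dataclasses import dataclass


@dataclass
class PilotResult:
    name: str
    it_kwh: float              # energy consumed by IT equipment
    cooling_kwh: float         # fans, pumps, chillers
    other_overhead_kwh: float  # power distribution, lighting, etc.
    component_failures: int
    server_months: float       # number of servers times months observed

    @property
    def pue(self) -> float:
        return (self.it_kwh + self.cooling_kwh + self.other_overhead_kwh) / self.it_kwh

    @property
    def cooling_kwh_per_it_kwh(self) -> float:
        return self.cooling_kwh / self.it_kwh

    @property
    def failures_per_server_year(self) -> float:
        return self.component_failures / (self.server_months / 12.0)


def worth_scaling(baseline: PilotResult, pilot: PilotResult,
                  min_cooling_saving: float = 0.20,
                  max_failure_ratio: float = 1.25) -> bool:
    """Hypothetical rule: enough cooling savings, no large rise in failures."""
    saving = 1.0 - pilot.cooling_kwh_per_it_kwh / baseline.cooling_kwh_per_it_kwh
    failures_ok = (pilot.failures_per_server_year
                   <= max_failure_ratio * baseline.failures_per_server_year)
    return saving >= min_cooling_saving and failures_ok


# Illustrative values only, not measured data.
baseline = PilotResult("air-cooled racks", 100_000, 40_000, 10_000, 3, 480)
pilot = PilotResult("D2C pilot racks", 100_000, 20_000, 10_000, 2, 480)

for r in (baseline, pilot):
    print(f"{r.name}: PUE {r.pue:.2f}, "
          f"cooling {r.cooling_kwh_per_it_kwh:.2f} kWh per IT kWh, "
          f"{r.failures_per_server_year:.3f} failures per server-year")
print("scale up:", worth_scaling(baseline, pilot))
```

With only a few racks and a short observation window, failure counts are small and statistically noisy, so the reliability comparison should be read as indicative rather than conclusive.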

Safety and standards matter. ASHRAE provides thermal guidelines that remain widely referenced; note that some guidance dates from earlier years and should be checked against recent case studies and vendor data. Long-term data on mean time between failures for immersion systems is still limited in the open literature, so operators should track reliability closely during pilots.

Reusing waste heat: opportunities and limits

Instead of ejecting server heat to the atmosphere, some facilities capture and reuse it. The most visible application is feeding heat into district heating networks that serve homes and businesses. Data center waste heat is often low-temperature—commonly in the range of 25–40 °C at point of capture—so direct injection into high-temperature grids is rarely possible without technology to raise the temperature.

Two typical engineering routes appear in European pilots: use of heat pumps to increase temperature to district heating levels (often 60–80 °C), or combining data center heat with other heat sources in a cascade. Studies and projects in Scandinavia and Central Europe show that project sizes range from a few hundred kW to multiple MW of thermal power. Economic viability depends on connection costs, the local heat price, and the existence of a long-term buyer such as a municipal utility.

There are practical constraints. Low outlet temperatures mean higher capital and operating costs for heat pumps. Grid operators also need stable and measurable inputs; that requires robust metering, agreed service levels and risk sharing in contracts. Regulatory and accounting frameworks for heat trading vary by country and can slow project timelines. On the positive side, when the pieces align—utility partnership, suitable network temperature and a reasonable price—the local emissions benefit can be significant because fossil fuel use in the heat system is avoided.
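The effect of a low source temperature on heat pump operation can be estimated with the ideal (Carnot) heating COP, T_sink / (T_sink − T_source) with temperatures in kelvin. The sketch below scales that ideal value by an assumed factor of 0.5 to approximate a real machine; that factor, the 1 MW heat demand and the function names are illustrative assumptions, not vendor data.

```python
def carnot_cop_heating(source_c: float, sink_c: float) -> float:
    """Ideal heating COP between a source and a sink temperature (in deg C)."""
    t_source_k = source_c + 273.15
    t_sink_k = sink_c + 273.15
    if t_sink_k <= t_source_k:
        raise ValueError("sink must be warmer than source")
    return t_sink_k / (t_sink_k - t_source_k)


def electricity_for_heat_kw(heat_out_kw: float, source_c: float, sink_c: float,
                            carnot_fraction: float = 0.5) -> float:
    """Electrical input for a given heat output; carnot_fraction is an assumption."""
    cop = carnot_fraction * carnot_cop_heating(source_c, sink_c)
    return heat_out_kw / cop


HEAT_OUT_KW = 1000.0  # assumed heat delivered to the network
SINK_C = 70.0         # within the 60-80 deg C range mentioned above

for source_c in (25.0, 35.0, 40.0):
    cop = 0.5 * carnot_cop_heating(source_c, SINK_C)
    power = electricity_for_heat_kw(HEAT_OUT_KW, source_c, SINK_C)
    print(f"source {source_c:.0f} C -> estimated COP {cop:.1f}, "
          f"about {power:.0f} kW electricity per {HEAT_OUT_KW:.0f} kW heat")
```

The trend is the point here: the cooler the captured heat, the larger the temperature lift and the more electricity the heat pump needs per unit of delivered heat.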

In summary, waste heat reuse is technically feasible and increasingly practiced in colder climates with strong district heating ecosystems, but it is not a universal solution. Each project needs a clear techno-economic analysis and an operational agreement with the local heat buyer.

Balancing energy, cost and future needs

Choosing a cooling route involves trading off three groups of variables: energy consumption and carbon intensity, capital and operating costs, and operational complexity and risk. For example, a greenfield data center designed around liquid cooling can realize lower long-term operating costs and greater compute density, but it typically requires higher upfront investment and careful supplier selection. Conversely, retrofitting an existing hall with rear‑door exchangers or localized D2C may reduce some cooling electricity but will often show a longer payback.
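A simple payback calculation gives a first feel for this trade-off. The sketch below is deliberately crude: it ignores discounting, downtime costs and density benefits, and every number in it is a made-up placeholder rather than a market figure.

```python
def simple_payback_years(extra_capex: float, annual_kwh_saved: float,
                         price_per_kwh: float, extra_annual_opex: float = 0.0) -> float:
    """Years until energy savings cover the additional investment (undiscounted)."""
    net_annual_saving = annual_kwh_saved * price_per_kwh - extra_annual_opex
    if net_annual_saving <= 0:
        return float("inf")  # the investment never pays back on energy alone
    return extra_capex / net_annual_saving


# Hypothetical scenarios (placeholder values for illustration).
scenarios = {
    "greenfield liquid-cooled design": dict(
        extra_capex=2_000_000, annual_kwh_saved=3_000_000, extra_annual_opex=50_000),
    "rear-door retrofit of existing hall": dict(
        extra_capex=800_000, annual_kwh_saved=800_000, extra_annual_opex=20_000),
}

for price in (0.10, 0.15, 0.25):  # assumed electricity prices per kWh
    print(f"electricity price {price:.2f} per kWh")
    for name, s in scenarios.items():
        years = simple_payback_years(price_per_kwh=price, **s)
        print(f"  {name}: {years:.1f} years")
```

Running the loop over several prices also shows how sensitive the result is to the electricity price, which is why a site-specific analysis is needed before committing to either route.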

Operators should consider several pragmatic steps. First, quantify current energy flows precisely: measure IT load, cooling energy, and waste heat temperatures over several months. Second, run small pilots with clear metrics and a plan to capture both energy and reliability data. Third, assess the potential for heat reuse with local utilities: what temperatures do they need, what is their willingness to pay, and are grid upgrades required?

Policy and market context matter as well. Higher electricity prices and carbon pricing make liquid cooling and heat reuse more attractive; subsidies for low-temperature heat projects or grants for industrial heat recovery can change the balance. Equally, future workload trends—more AI training jobs, denser GPUs—push sites toward liquid cooling simply because air systems hit practical density limits.

Finally, operators should avoid vendor lock-in. Test interoperability, insist on service level agreements for spare parts and support, and document maintenance procedures. That reduces operational risk and keeps future options open if workload characteristics or local energy markets change.

Conclusion

Cooling choices in data centers are not only technical details: they shape ongoing energy use, operating cost and a facility’s ability to contribute useful heat back to its surroundings. Liquid cooling can lower the electricity needed for climate control and enable much higher rack densities, but it brings capital cost, operational changes and new reliability considerations. Reusing waste heat for district heating is attractive where temperatures can be upgraded and commercial terms are available, yet it depends on local networks and contracts. A prudent path for most operators is measurement, small pilots, and close cooperation with utilities and equipment suppliers before large-scale rollouts.

Share your experience: if you’ve worked on cooling or heat‑reuse projects, join the discussion and pass this article on to colleagues.
