Networks of small, task-focused systems are being combined into larger problem solvers, and the term “AI agents orchestration” describes how those pieces are coordinated. This article outlines what an AI agent is, how agents are organised into teams, and why orchestration matters for reliability, regulation, and business architecture. Readers get practical examples and a short checklist for evaluating agent-based designs.

Introduction

People building modern software increasingly split complex tasks into smaller, specialised components. In AI, those components often look like agents: software modules that perceive inputs, act on them and pursue simple goals. This design can make large systems easier to understand and to update, but it also creates new coordination problems: who assigns work, who merges results, and how do you keep a clear audit trail?

Think of an online service that answers customer questions while also checking inventory, suggesting refunds and flagging potential fraud. Each function can be an agent with a narrow role. Orchestration is the layer that decides which agent runs when, how their outputs are combined, and how failures are handled. The following sections explain the technical basics, show concrete uses, discuss the trade-offs, and outline plausible next steps for companies and policymakers.

AI agents orchestration fundamentals

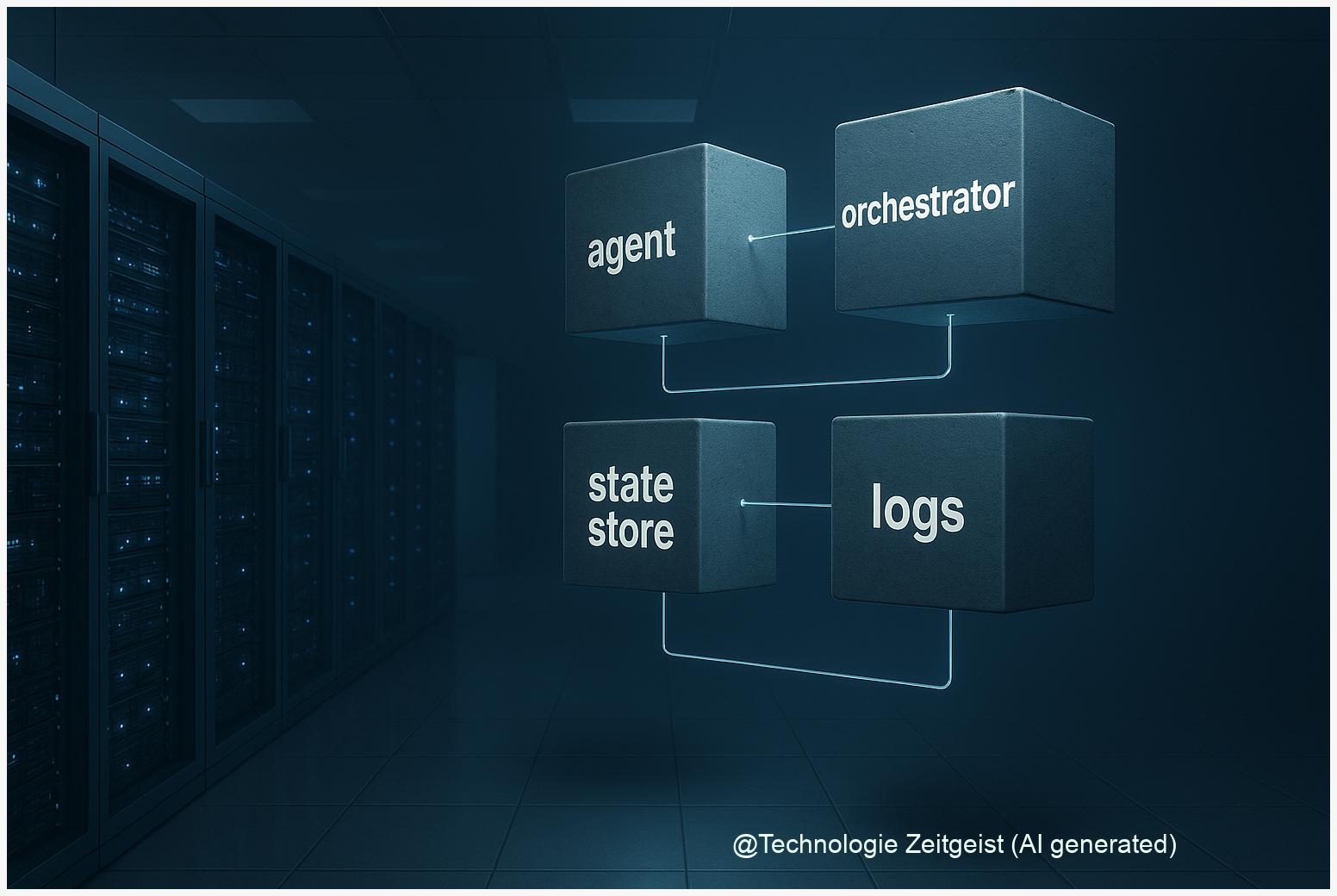

Start with clear terms. An “agent” in computer science is a program that senses its environment and takes actions to meet goals; it may be simple and rule-based or more complex and adaptive. An “orchestrator” is a coordination layer that assigns tasks, sequences steps, and records state. “AI agents orchestration” therefore refers to the methods and systems that control interactions among multiple agents so they solve larger, composite tasks reliably.

Orchestration balances delegation, monitoring and recovery: it is the connective logic that keeps a system predictable even when individual agents change or fail.

Architectural patterns fall on a spectrum. Centralised orchestration gives one controller the full workflow and state; decentralised choreography lets agents follow local rules and discover work through messages. Hybrid designs mix both: a central coordinator for high-level goals and local peer-to-peer negotiation for routine tasks. Each choice affects latency, scalability and the ease of auditing decisions.
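The two ends of that spectrum can be sketched in a few lines. This is a minimal, single-process illustration, not a production design: `run_centralised`, `EventBus`, and the two toy agents (`normalise`, `tag`) are hypothetical names invented for this example.

```python
from collections import defaultdict
from typing import Callable


# Centralised orchestration: one controller owns the workflow order.
def run_centralised(message: str, steps: list[Callable[[str], str]]) -> str:
    """Call each agent in a fixed order; the controller holds all state."""
    result = message
    for step in steps:
        result = step(result)
    return result


# Decentralised choreography: agents react to topics on a shared bus.
class EventBus:
    """Tiny in-memory publish/subscribe bus (hypothetical, single-process)."""

    def __init__(self) -> None:
        self._subscribers: dict[str, list[Callable[[str], None]]] = defaultdict(list)

    def subscribe(self, topic: str, handler: Callable[[str], None]) -> None:
        self._subscribers[topic].append(handler)

    def publish(self, topic: str, payload: str) -> None:
        # Deliver synchronously to every handler registered for the topic.
        for handler in list(self._subscribers[topic]):
            handler(payload)


# Hypothetical agents: plain string transforms.
def normalise(text: str) -> str:
    return text.strip().lower()


def tag(text: str) -> str:
    return f"[tagged] {text}"


# Centralised: the controller decides the order explicitly.
central_result = run_centralised("  Hello  ", [normalise, tag])

# Choreography: each agent only knows the topic it listens to and the one it emits.
bus = EventBus()
choreo_results: list[str] = []
bus.subscribe("raw", lambda p: bus.publish("normalised", normalise(p)))
bus.subscribe("normalised", lambda p: bus.publish("tagged", tag(p)))
bus.subscribe("tagged", choreo_results.append)
bus.publish("raw", "  Hello  ")
```

Both paths produce the same output here. The difference is where the workflow lives: in the first case it is one readable list, which is easy to audit; in the second it is implied by the topic wiring, which scales out more naturally but has to be reconstructed from subscriptions when something goes wrong.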

Key components in most practical stacks are:

- Task scheduler or workflow engine that sequences work

- State store for persistent context and logs

- Agent adapters (APIs) that normalise inputs/outputs

- Observability and retry logic to handle failures
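As a rough sketch of how these four components fit together, assuming an in-memory state store and agents that are plain Python callables: `StateStore`, `AgentAdapter`, `run_workflow` and the two example agents are hypothetical names for illustration, not part of any particular framework.

```python
import time
from dataclasses import dataclass, field
from typing import Any, Callable


@dataclass
class StateStore:
    """In-memory stand-in for a persistent store of context and logs."""
    context: dict[str, Any] = field(default_factory=dict)
    log: list[dict[str, Any]] = field(default_factory=list)


@dataclass
class AgentAdapter:
    """Wraps an agent so the workflow always sees dict in, dict out."""
    name: str
    handler: Callable[[dict[str, Any]], dict[str, Any]]


def run_workflow(adapters: list[AgentAdapter], store: StateStore,
                 max_attempts: int = 3, backoff_seconds: float = 0.0) -> StateStore:
    """Sequential scheduler: run each agent in order, retrying on failure."""
    for adapter in adapters:
        for attempt in range(1, max_attempts + 1):
            try:
                # Pass a copy so a failing agent cannot corrupt shared context.
                output = adapter.handler(dict(store.context))
            except Exception as exc:
                store.log.append({"agent": adapter.name, "attempt": attempt,
                                  "status": "error", "detail": str(exc)})
                if attempt == max_attempts:
                    raise
                time.sleep(backoff_seconds * attempt)
            else:
                store.context.update(output)
                store.log.append({"agent": adapter.name, "attempt": attempt,
                                  "status": "ok"})
                break
    return store


# Hypothetical agents: the first fails once to exercise the retry path.
_calls = {"count": 0}


def flaky_lookup(ctx: dict[str, Any]) -> dict[str, Any]:
    _calls["count"] += 1
    if _calls["count"] == 1:
        raise RuntimeError("temporary failure")
    return {"stock": 4}


def summarise(ctx: dict[str, Any]) -> dict[str, Any]:
    return {"summary": f"{ctx['item']}: {ctx['stock']} in stock"}


store = StateStore(context={"item": "widget"})
run_workflow([AgentAdapter("lookup", flaky_lookup),
              AgentAdapter("summarise", summarise)], store)
```

After the run, `store.log` holds one error entry and two success entries, so the failed attempt stays visible instead of being silently absorbed by the retry.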

The table below summarises common agent categories:

| Category | Main role | Typical characteristics |
|---|---|---|
| Reactive agent | Responds to events with fixed rules | Low latency, predictable |
| Goal-directed agent | Plans steps to achieve objectives | Flexible, needs more state |
| Coordinator / Orchestrator | Allocates tasks and merges results | Provides audit logs and control |

Technologies used vary from simple message queues to specialised frameworks and orchestration engines. Projects often combine proven tools (workflow engines, persistent stores) with AI-specific layers (policy modules, chain-of-decision logs) to make agent behaviour inspectable and repeatable.

How agent networks are used in practice

Practical deployments tend to start with narrow goals. One common example is automated customer service where separate agents handle intent classification, knowledge retrieval, answer generation and compliance checks. The orchestrator maps a customer message into a sequence: identify intent → fetch documents → draft response → run policy checks → deliver answer. Each step is an agent or a small group of agents.
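The sequence above might look like this in code. It is a toy sketch: the keyword-based intent classifier, the two-entry `KNOWLEDGE_BASE`, the `BANNED_PHRASES` rule and the escalation message are all placeholder assumptions standing in for real models, retrieval and policy.

```python
from dataclasses import dataclass, field


@dataclass
class Ticket:
    """Shared record that each agent reads from and writes to."""
    message: str
    intent: str = ""
    documents: list[str] = field(default_factory=list)
    draft: str = ""
    approved: bool = False
    trace: list[str] = field(default_factory=list)


# Placeholder data for illustration only.
KNOWLEDGE_BASE = {
    "refund": ["Refunds are issued within 14 days of a return."],
    "shipping": ["Standard shipping takes 3 to 5 working days."],
}
BANNED_PHRASES = ("guarantee",)


def classify_intent(ticket: Ticket) -> Ticket:
    text = ticket.message.lower()
    ticket.intent = next((k for k in KNOWLEDGE_BASE if k in text), "unknown")
    return ticket


def fetch_documents(ticket: Ticket) -> Ticket:
    ticket.documents = KNOWLEDGE_BASE.get(ticket.intent, [])
    return ticket


def draft_response(ticket: Ticket) -> Ticket:
    ticket.draft = " ".join(ticket.documents)
    return ticket


def policy_check(ticket: Ticket) -> Ticket:
    # Approve only non-empty drafts that contain no banned phrase.
    ticket.approved = bool(ticket.draft) and not any(
        phrase in ticket.draft.lower() for phrase in BANNED_PHRASES
    )
    return ticket


PIPELINE = [classify_intent, fetch_documents, draft_response, policy_check]


def handle(message: str) -> str:
    """Orchestrator: run the steps in order, then deliver or escalate."""
    ticket = Ticket(message)
    for step in PIPELINE:
        ticket = step(ticket)
        ticket.trace.append(step.__name__)  # record which agent ran
    return ticket.draft if ticket.approved else "Escalated to a human agent."
```

Calling `handle("How do I get a refund?")` returns the refund sentence from the placeholder knowledge base, while a message with no recognised intent produces an empty draft, fails the policy check and is escalated.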

Another area is enterprise automation. Companies use agent networks to run recurrent tasks such as invoice processing: an extraction agent reads invoices, a validation agent checks data against rules, a payment agent prepares transfer requests and a monitoring agent logs anomalies. Dividing work makes errors easier to trace: if the validation agent flags a field, the log shows exactly which component raised the issue.

In research and robotics, multi-agent systems let specialised robots or subsystems cooperate: mapping, path planning and manipulation can be handled by separate agents, coordinated by a scheduler that resolves conflicts in shared space. For software, specialised tool-use agents (e.g., a web-search agent, a database agent, and a summarisation agent) are combined to create a persistent assistant that can handle multi-step queries.

Open-source frameworks and cloud services offer building blocks: some focus on message passing and distributed execution, others add utilities for agent design and logging. These frameworks help teams prototype faster, but choices about central vs distributed control, retry semantics and state persistence still need careful design to avoid subtle failures.

Opportunities and risks in real deployments

Agent networks make systems modular and easier to update: swapping a single agent is cheaper than retraining an entire monolith. For businesses, this can mean faster feature iteration and clearer accountability about which component made a decision. Operationally, orchestration enables flexible scaling: burst capacity can be applied where agents are busiest.

Yet the approach introduces new risks. Distributed decision-making complicates explainability: when several agents propose conflicting outputs, the orchestrator must choose and record why. Security concerns rise because more components mean more interfaces to secure. Latency can increase when agents make sequential calls, and debugging distributed failures requires rigorous observability.
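One way to make the "choose and record why" step concrete is to have the orchestrator return the reason alongside the choice. The sketch below assumes a fixed precedence table and self-reported confidence scores; `Proposal`, `Decision`, `PRIORITY` and the agent names are hypothetical, and a real system might use a very different resolution rule.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Proposal:
    agent: str
    action: str
    confidence: float  # 0.0 to 1.0, self-reported by the agent


@dataclass(frozen=True)
class Decision:
    chosen: Proposal
    reason: str
    rejected: tuple[Proposal, ...]


# Hypothetical precedence table: a lower number wins over a higher one.
PRIORITY = {"fraud_agent": 0, "refund_agent": 1}
DEFAULT_RANK = 99  # agents missing from the table rank last


def resolve(proposals: list[Proposal]) -> Decision:
    """Pick one proposal by precedence, then confidence, and say why."""
    if not proposals:
        raise ValueError("no proposals to resolve")
    ranked = sorted(
        proposals,
        key=lambda p: (PRIORITY.get(p.agent, DEFAULT_RANK), -p.confidence),
    )
    chosen = ranked[0]
    reason = (
        f"{chosen.agent} selected: precedence rank "
        f"{PRIORITY.get(chosen.agent, DEFAULT_RANK)}, "
        f"confidence {chosen.confidence:.2f}"
    )
    return Decision(chosen=chosen, reason=reason, rejected=tuple(ranked[1:]))


decision = resolve([
    Proposal("refund_agent", "approve_refund", 0.9),
    Proposal("fraud_agent", "hold_for_review", 0.6),
])
```

Here the fraud agent wins despite lower confidence because the precedence table ranks it first, and `decision.reason` and `decision.rejected` keep both the justification and the losing proposal available for later review.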

From a regulatory perspective, systems composed of adaptive agents raise questions under existing rules such as the EU AI Act, where obligations often depend on context and use. Many public guidance documents treat AI systems broadly, so agent-based architectures should include robust logging, human oversight options and documentation of training and update pipelines. Note that core regulatory texts such as the EU proposal (2021) and frameworks such as NIST’s AI Risk Management Framework (2023) were published more than two years before this article; they remain relevant for principles, but readers should check the latest guidance.

Mitigations that practitioners use include deterministic state logging (a chain-of-decisions), idempotent task design so retries are safe, strict interface contracts between agents, and automated tests that exercise multi-agent flows, not only single agents in isolation. Governance also helps: define roles and responsibilities, require documented failover behaviour and run periodic compliance checks.
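Two of these mitigations, idempotent tasks and a chain-of-decisions log, can be sketched together. The hash-chained log is one possible reading of "chain-of-decisions", not the only one, and `DecisionLog`, `PaymentAgent` and the idempotency key format are hypothetical names chosen for the example.

```python
import hashlib
import json
from typing import Any

GENESIS_HASH = "0" * 64  # placeholder "previous hash" for the first entry


def _digest(body: dict[str, Any]) -> str:
    """Deterministic SHA-256 over a JSON body with sorted keys."""
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode("utf-8")).hexdigest()


class DecisionLog:
    """Append-only log where each entry commits to the previous entry's hash."""

    def __init__(self) -> None:
        self.entries: list[dict[str, Any]] = []

    def append(self, agent: str, decision: dict[str, Any]) -> None:
        prev_hash = self.entries[-1]["hash"] if self.entries else GENESIS_HASH
        body = {"agent": agent, "decision": decision, "prev": prev_hash}
        self.entries.append({**body, "hash": _digest(body)})

    def verify(self) -> bool:
        """Recompute every hash; any edited or reordered entry breaks the chain."""
        prev_hash = GENESIS_HASH
        for entry in self.entries:
            body = {key: entry[key] for key in ("agent", "decision", "prev")}
            if entry["prev"] != prev_hash or entry["hash"] != _digest(body):
                return False
            prev_hash = entry["hash"]
        return True


class PaymentAgent:
    """Idempotent: the same key never creates a second transfer."""

    def __init__(self, log: DecisionLog) -> None:
        self.log = log
        self.transfers: dict[str, dict[str, Any]] = {}

    def prepare_transfer(self, idempotency_key: str, amount_cents: int) -> dict[str, Any]:
        if idempotency_key in self.transfers:
            # A retry returns the stored result instead of acting again.
            return self.transfers[idempotency_key]
        transfer = {"key": idempotency_key, "amount_cents": amount_cents}
        self.transfers[idempotency_key] = transfer
        self.log.append("payment_agent", transfer)
        return transfer


log = DecisionLog()
agent = PaymentAgent(log)
first = agent.prepare_transfer("invoice-001", 12500)
retry = agent.prepare_transfer("invoice-001", 12500)  # simulated retry
```

The retry returns the original transfer and adds no second log entry, so an orchestrator can re-run the step after a timeout without risking a duplicate payment. `log.verify()` returns `True` until someone edits an entry after the fact.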

Where this approach could lead

Networks of specialised agents could reshape enterprise AI architecture by making systems more modular, auditable and adaptable. One plausible path is standardised agent spec sheets: small, machine-readable descriptions of an agent’s capabilities, inputs, outputs and trust boundaries. Those spec sheets would let orchestrators discover and integrate agents dynamically while preserving safety checks.
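No such standard exists in the text above, so the following is purely illustrative: a guess at what a machine-readable spec sheet and a discovery step could look like. The field names, the `trust_boundary` values and the `find_agents` helper are assumptions made for this sketch.

```python
import json
from dataclasses import dataclass


@dataclass(frozen=True)
class AgentSpec:
    """Hypothetical spec sheet: what an agent does and where it may run."""
    name: str
    capabilities: tuple[str, ...]
    inputs: tuple[str, ...]
    outputs: tuple[str, ...]
    trust_boundary: str  # assumed values: "internal" or "external"


def load_spec(raw: str) -> AgentSpec:
    """Parse a JSON spec sheet; a missing field raises KeyError."""
    data = json.loads(raw)
    return AgentSpec(
        name=data["name"],
        capabilities=tuple(data["capabilities"]),
        inputs=tuple(data["inputs"]),
        outputs=tuple(data["outputs"]),
        trust_boundary=data["trust_boundary"],
    )


def find_agents(registry: list[AgentSpec], capability: str,
                allowed_boundaries: set[str]) -> list[AgentSpec]:
    """Discovery with a safety check: capability must match and the
    agent's trust boundary must be one the caller permits."""
    return [
        spec for spec in registry
        if capability in spec.capabilities
        and spec.trust_boundary in allowed_boundaries
    ]


registry = [
    load_spec(json.dumps({
        "name": "intranet_search", "capabilities": ["web_search"],
        "inputs": ["query"], "outputs": ["documents"],
        "trust_boundary": "internal",
    })),
    load_spec(json.dumps({
        "name": "public_search", "capabilities": ["web_search"],
        "inputs": ["query"], "outputs": ["documents"],
        "trust_boundary": "external",
    })),
]

matches = find_agents(registry, "web_search", {"internal"})
```

With only `"internal"` allowed, discovery returns `intranet_search` and skips the external agent even though both advertise the same capability. That is the kind of safety check a spec sheet would need to support.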

Interoperability standards could emerge that define message formats, state checkpoints and audit log schemas. If widely adopted, such standards would reduce integration costs and improve transparency. At the same time, certification regimes or sector-specific guidance (for finance or health) may require stricter controls for agent deployment, including documented testing and third-party evaluation.

For individual developers and teams, the practical implication is to think in layers: separate capability design (what an agent can do) from orchestration policy (when and how it is used) and from governance (who is accountable). That separation makes it possible to evolve agents independently while keeping system-wide rules consistent. It also simplifies incident response: logs link a decision back to an agent and the orchestrator policy that allowed it.

Finally, combining agent networks with strong observability, rigorous testing and clear governance could make AI systems both powerful and predictable. However, this outcome depends on careful design choices rather than the mere use of multiple agents. The orchestration layer is where reliability, compliance and user trust are won or lost.

Conclusion

AI agents orchestration describes the practical methods that let many small, specialised AI components work together as a composed system. The approach promises modularity, clearer accountability and scalable development, but it shifts complexity into coordination, logging and governance. For organisations, the sensible route is to prototype with clear state logs, define interface contracts and run risk assessments that map agent behaviours to legal and operational obligations. Those measures make it easier to benefit from agent networks without giving up control.

