AI agents are software systems that take actions in pursuit of a goal on a user's behalf, and they are already part of many digital workflows. This article looks at how AI agents work, what they realistically do well today, and where their limits remain. The term “AI agents” is used here as a practical label for tools that combine language models, memory, and tool access to carry out multi-step tasks.

Introduction

Many services now claim they use “agents” to automate work. That promise raises two practical questions: which tasks can these systems actually perform, and which cannot? Everyday examples help make the stakes concrete. A travel planner that books flights across multiple sites, a helpdesk assistant that triages email, or a corporate dashboard that pulls data and raises alerts — these are all agentic patterns that people encounter or test today.

At a technical level, most of these systems build on large language models, or LLMs. An LLM is a type of AI model trained to predict and generate text; it helps agents plan in natural language and call tools. The agent concept bundles LLM reasoning with other pieces: memory to remember past steps, tool connectors to act on external services, and a planner that breaks complex tasks into steps. This article uses a practical viewpoint to explain how those layers fit together, to show typical use cases, and to identify concrete safety and reliability requirements that matter for adoption in 2026.

How AI agents work

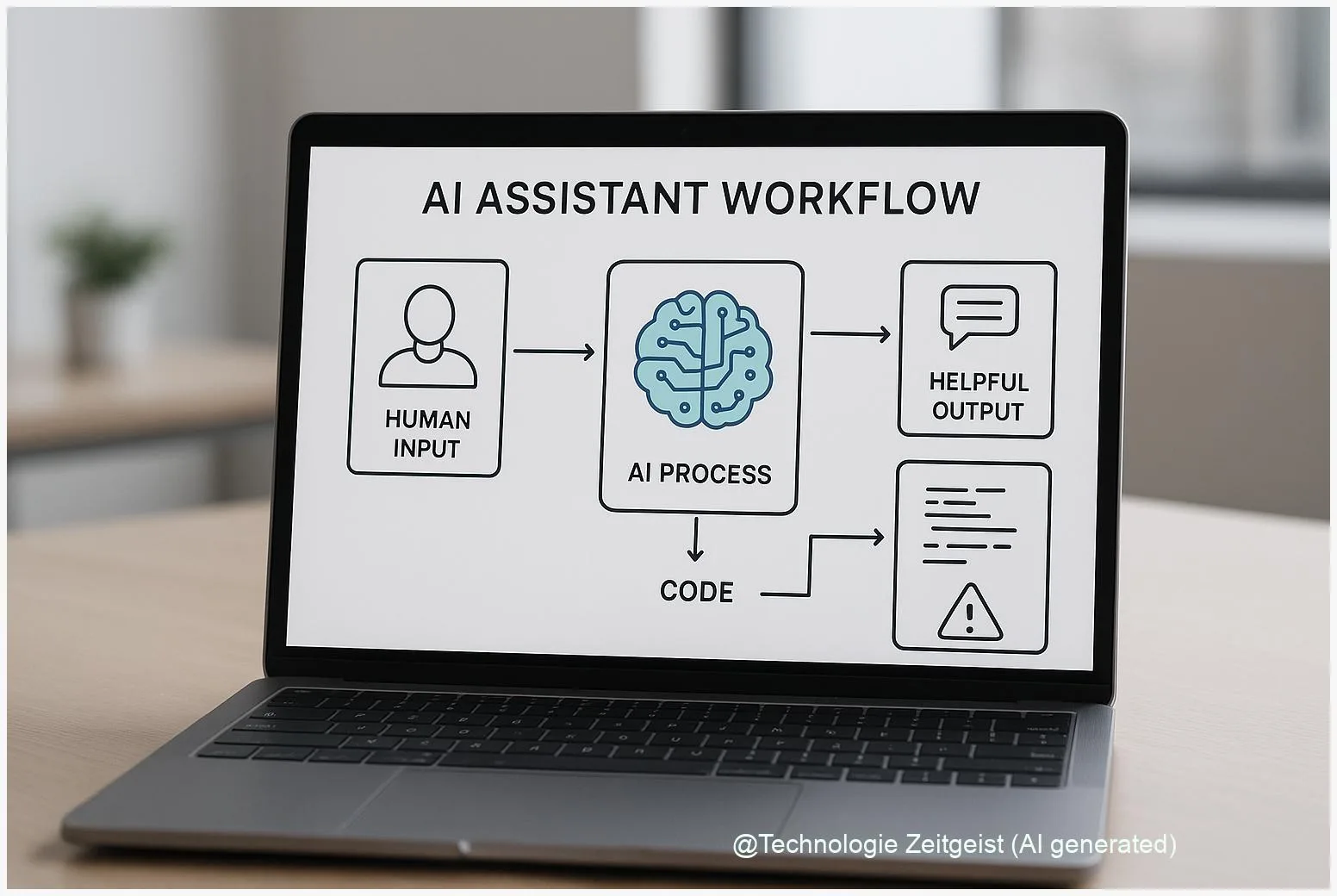

At a glance, an AI agent coordinates several components to move from goal to action. The simplest loop is: receive input, plan a next step, call a tool or produce an output, record the result, and repeat until the goal is reached or a human intervenes. In practice, projects add features: short-term working memory for the current task, long-term memory for user preferences, access controls for sensitive APIs, and a planner module that can break a request into sub-tasks.
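The loop described above can be sketched in a few lines of Python. This is a minimal illustration, not a reference implementation: the names `Step`, `Agent`, and `toy_planner` are hypothetical, and the planner is a hard-coded stand-in for what would normally be an LLM call.

```python
from dataclasses import dataclass, field
from typing import Callable, Optional


@dataclass
class Step:
    tool: str      # name of the tool to call
    argument: str  # input passed to that tool


@dataclass
class Agent:
    # Returns the next Step, or None once the goal is considered reached.
    planner: Callable[[str, list], Optional[Step]]
    tools: dict          # tool name -> function taking and returning a string
    max_steps: int = 5   # hard stop so the loop cannot run forever
    history: list = field(default_factory=list)

    def run(self, goal: str) -> list:
        for _ in range(self.max_steps):
            step = self.planner(goal, self.history)        # plan a next step
            if step is None:                               # goal reached
                break
            result = self.tools[step.tool](step.argument)  # call a tool
            self.history.append((step.tool, step.argument, result))  # record the result
        return self.history


def toy_planner(goal: str, history: list) -> Optional[Step]:
    # Stand-in for an LLM: search once, then stop.
    return Step("search", goal) if not history else None


agent = Agent(planner=toy_planner, tools={"search": lambda q: f"results for {q}"})
trace = agent.run("flights to Oslo")
print(trace)
```

The `max_steps` cap is one simple way to guarantee the loop ends even if the planner never signals completion; a real system would typically also let a human interrupt it.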

A useful mental model is to think of an agent as an application platform that combines three families of capabilities: reasoning in language (the LLM), connectors that perform actions (APIs, web automation, scripts), and persistence (memory, logs). The planner links those capabilities by translating a high-level goal into ordered steps. Where errors occur, they often come from mismatches between a planner’s assumptions and the real world — for example, a planner assuming a website structure that has changed.

“Agents are systems that perceive context, make a plan, and act — and they only improve if their actions are monitored and corrected.”

To make this concrete, the table below lists common agent layers and what they do.

| Layer | Role | Typical example |
|---|---|---|
| Reasoning (LLM) | Interprets goals and suggests next steps | Drafts email text; chooses a data query |
| Tools & Connectors | Carry out actions in external systems | Call calendar API; make a purchase request |
| Memory & Logs | Store context and past outcomes | Remember user preferences; audit trail |

Benchmarks and technical reports from 2023–2024 exposed where these components struggle together. For example, behavior-focused benchmarks such as R-Judge evaluate whether an agent recognises risky or inappropriate instructions; results published in 2024 showed that even the best-performing models reached only roughly 72–74% F1 on the benchmark, which leaves a substantial share of risky cases misjudged. That gap matters: it shows that reasoning and tool use together create failure modes that do not appear in isolated language tasks.
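For readers unfamiliar with the metric, F1 is the harmonic mean of precision (how many flagged cases were truly risky) and recall (how many risky cases were flagged). The short sketch below computes it from raw counts. The counts are invented purely for illustration and are not taken from R-Judge.

```python
def f1_score(true_positives: int, false_positives: int, false_negatives: int) -> float:
    """Harmonic mean of precision and recall, computed from raw counts."""
    if true_positives == 0:
        return 0.0  # nothing was flagged correctly, so F1 is zero
    precision = true_positives / (true_positives + false_positives)
    recall = true_positives / (true_positives + false_negatives)
    return 2 * precision * recall / (precision + recall)


# Hypothetical counts: 70 risky cases flagged correctly,
# 25 safe cases flagged by mistake, 25 risky cases missed.
score = f1_score(true_positives=70, false_positives=25, false_negatives=25)
print(round(score, 3))  # 0.737
```

With these made-up numbers, a score in the low 70s means roughly one in four risky cases goes unflagged, and roughly one in four flags is a false alarm.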

Where they help: everyday and business uses

AI agents are most useful where work follows repeatable steps, needs coordination across services, or benefits from persistent context. In consumer settings, common examples are personal assistants that manage calendars, draft common messages, or keep shopping lists synced across services. In such tasks the agent reduces friction — it copies information from one app to another, remembers preferences, and confirms actions with a short summary.

In business, agents assist in automating workflows that previously required human handoffs. Examples include automating parts of customer support: an agent can read an incoming ticket, query internal knowledge bases, draft a suggested reply, and either send it or hand it to a human for approval. Another useful pattern is report generation: agents can pull data from multiple databases, draft charts and summaries, and produce a first-pass document that an analyst edits.
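The support flow above (read ticket, consult knowledge base, draft reply, send or hand off) might look roughly like this. Everything here is a hypothetical sketch: the keyword lookup stands in for an LLM plus a real knowledge base, and the `confidence` score and the 0.8 threshold are assumptions chosen for illustration.

```python
from dataclasses import dataclass


@dataclass
class Draft:
    reply: str
    confidence: float  # hypothetical score between 0 and 1


# Toy knowledge base; a real one would be a search index or database.
KNOWLEDGE_BASE = {
    "password": "You can reset your password from the login page.",
    "refund": "Refunds are processed within five business days.",
}


def draft_reply(ticket_text: str) -> Draft:
    # Stand-in for LLM drafting: match a keyword, otherwise admit uncertainty.
    lowered = ticket_text.lower()
    for keyword, answer in KNOWLEDGE_BASE.items():
        if keyword in lowered:
            return Draft(answer, confidence=0.9)
    return Draft("Thanks for reaching out; a colleague will follow up.", confidence=0.2)


def route(ticket_text: str, threshold: float = 0.8) -> str:
    # Send automatically only when the draft clears the threshold.
    draft = draft_reply(ticket_text)
    return "send" if draft.confidence >= threshold else "human_review"


print(route("I forgot my password"))
print(route("My invoice looks wrong"))
```

Routing low-confidence drafts to a person keeps the human approval step explicit in code rather than leaving it to convention.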

These use cases rely on clear success criteria and constrained scope. Good tasks are those where correctness is verifiable and where the agent’s actions have limited downstream risk. Scheduling, data summarization, simple automation of repetitive queries, and information retrieval fit that description. Tasks that need professional judgement, legal responsibility, or significant physical control do not fit without robust human oversight.

Adoption in companies tends to follow a pragmatic path: sandboxed pilot, measurement of time saved or errors reduced, and stepwise integration. Many organisations prefer agents that keep humans in the loop at key decision points rather than fully autonomous systems. That pattern reduces both operational risk and the need for complex liability arrangements.

Risks, limits and safety controls

Agents combine the weaknesses of their parts and add new, compound risks. When an LLM hallucinates (invents plausible‑sounding but false facts) and the agent then uses a connector to act on that false output, the error stops being a wrong sentence and becomes an executed action, such as a sent email or a changed record. Other documented vulnerabilities include prompt injection, where an attacker crafts input that causes the agent to override safety checks, and tool abuse, where an agent misuses a legitimate API to extract sensitive data.

Research and policy reports from recent years emphasise three mitigation priorities. First, identification and access control: agents must authenticate actions and operate with least privilege, so a mistaken or malicious instruction cannot reach high‑impact systems. Second, monitoring and auditability: logs and human‑readable traces make it possible to reconstruct decisions and to stop an agent quickly. Third, staged deployment: start in sandboxes and escalate privileges only with clear, measurable performance and safety checks.
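The first two priorities, least privilege and auditability, can be combined in a thin wrapper around tool calls. The sketch below is one possible shape under stated assumptions: `ToolGateway`, the tool names, and the log format are all hypothetical, and a production system would write to durable, tamper-resistant storage rather than an in-memory list.

```python
import json
import time


class ToolGateway:
    """Routes every tool call through an allowlist and records it."""

    def __init__(self, tools: dict, allowed: set):
        self.tools = tools           # tool name -> callable
        self.allowed = set(allowed)  # tools this agent may use
        self.audit_log = []          # one JSON line per attempted call

    def call(self, name: str, **kwargs):
        permitted = name in self.allowed and name in self.tools
        # Log the attempt before acting, so denied calls are recorded too.
        self.audit_log.append(json.dumps(
            {"time": time.time(), "tool": name, "args": kwargs, "permitted": permitted},
            default=str,
        ))
        if not permitted:
            raise PermissionError(f"tool {name!r} is not permitted for this agent")
        return self.tools[name](**kwargs)


gateway = ToolGateway(
    tools={
        "read_calendar": lambda day: f"no events on {day}",
        "make_purchase": lambda item: f"bought {item}",
    },
    allowed={"read_calendar"},  # least privilege: read-only access
)

result = gateway.call("read_calendar", day="Monday")
try:
    gateway.call("make_purchase", item="laptop")
    blocked = False
except PermissionError:
    blocked = True
```

Staged deployment then becomes a matter of widening the `allowed` set only after the audit log from the narrower configuration has been reviewed.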

Benchmarks such as R‑Judge (EMNLP 2024) examined how well models recognise risky instructions and found significant gaps. Those findings led experts to recommend multiple layers of automated checks, including specialized classifiers that look for dangerous patterns and a human review channel for uncertain cases. Policy papers also call for lifecycle controls: label agents visibly, require time‑to‑live limits for autonomous sessions, and define clear rollback and liability processes.
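A time‑to‑live limit of the kind mentioned above is simple to express in code. The following is a minimal sketch, assuming a hypothetical `AgentSession` class; the injectable `clock` parameter exists only to make the behaviour easy to test.

```python
import time


class AgentSession:
    """Autonomous session that refuses to act after its time-to-live expires."""

    def __init__(self, ttl_seconds: float, clock=time.monotonic):
        self._clock = clock
        self._expires_at = clock() + ttl_seconds

    def is_active(self) -> bool:
        return self._clock() < self._expires_at

    def act(self, action):
        # Check the deadline before every action, not just at start-up.
        if not self.is_active():
            raise TimeoutError("session expired; a human must start a new one")
        return action()


session = AgentSession(ttl_seconds=600)  # ten-minute autonomous window
print(session.act(lambda: "summary drafted"))
```

Using a monotonic clock avoids surprises if the system clock is adjusted while the session is running.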

Finally, transparency about limitations matters for trust. When an agent cannot verify a fact or when a connector failed, interfaces should present clear options: retry, escalate to a human, or abort. Such simple design choices reduce misuse and make it easier to integrate agents into responsible workflows.
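The retry, escalate, or abort choice can be made explicit in the code that wraps a connector call. This sketch is illustrative only: `run_with_fallback`, the `Outcome` values, and the policy of escalating whenever a human is available are assumptions, not a prescribed design.

```python
from enum import Enum


class Outcome(Enum):
    DONE = "done"
    ESCALATE = "escalate"
    ABORT = "abort"


def run_with_fallback(action, max_retries: int = 2, human_available: bool = True):
    """Try a connector call, retry on failure, then escalate or abort."""
    last_error = None
    for _ in range(max_retries + 1):
        try:
            return Outcome.DONE, action()
        except ConnectionError as error:  # treated here as a transient connector failure
            last_error = error
    # Retries exhausted: hand over to a person if possible, otherwise stop.
    outcome = Outcome.ESCALATE if human_available else Outcome.ABORT
    return outcome, str(last_error)


def unreachable_connector():
    raise ConnectionError("booking site did not respond")


print(run_with_fallback(unreachable_connector))
```

Returning the outcome together with the error message gives the interface what it needs to tell the user what happened and which option was taken.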

Where AI agents still fall short

There are several concrete areas where agents are not ready to replace humans. First, reliance on open web sources is brittle. Websites change, APIs evolve, and parsers for structured data break unless they are constantly maintained. Second, reasoning about novel, high‑stakes problems remains weak: agents can plan plausible steps for a familiar scenario, but when goals require nuanced judgement or domain expertise, they make avoidable mistakes.

Third, accountability and legal clarity lag behind technical capability. Current frameworks for liability assume a human decision maker; agents create grey zones where responsibility is shared between developers, operators, and possibly third‑party service providers. Regulators and institutions are still drafting rules for identification, audit requirements, and liability allocation.

Finally, evaluation is incomplete. Many academic and industry tests focus on narrow tasks; broad, real‑world evaluations with reproducible metrics are still emerging. That gap makes it hard to compare systems and to certify agents for safety‑critical domains such as healthcare, finance, or physical infrastructure. Until those evaluation and governance pieces mature, agents are best deployed where outcomes are reversible and oversight is practical.

Conclusion

AI agents are practical combinations of language reasoning, persistent context, and tool access. They accelerate a range of useful tasks where steps are repeatable and outcomes are verifiable, especially when humans remain in control at key moments. However, the same composition that makes them powerful also creates compound failures: hallucinations combined with automated actions, prompt injection, and brittle connectors are real operational hazards.

For people and organisations evaluating agents, the sensible path is cautious pilot projects, strong access controls, layered monitoring, and clear human escalation rules. Benchmarks and governance reports from 2023–2024 underline that agents need specialized safety checks beyond ordinary content filtering. With careful design and realistic scope, agents can be helpful tools; without these measures they can produce costly mistakes.
