Tag: Neural Networks

Deep dives into the architectures and training methods powering modern AI.

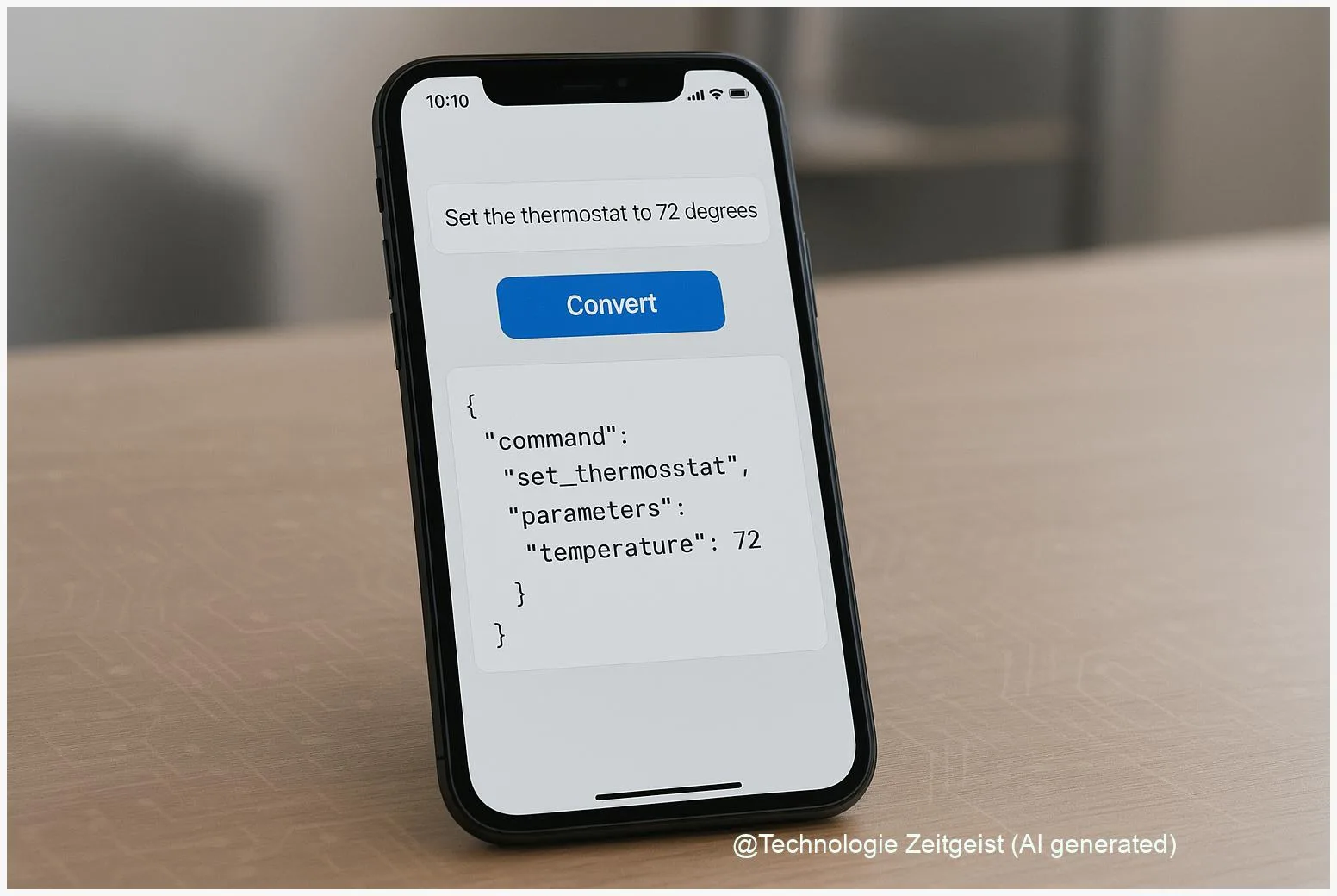

FunctionGemma: Compact function-calling models for on-device AI

FunctionGemma describes the idea of a compact function-calling model that runs on-device and maps text to structured API calls. Small models like a 270M-parameter instance

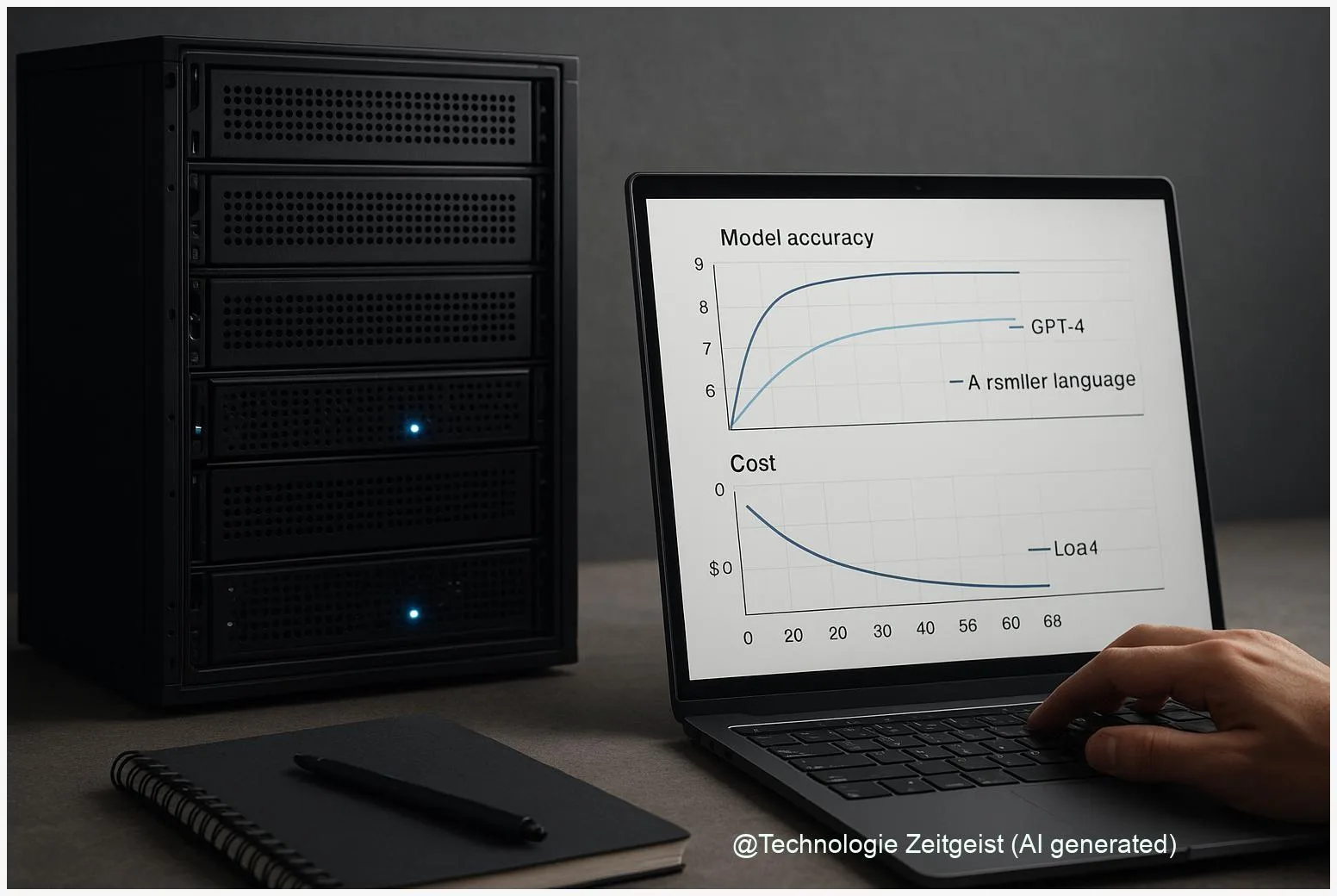

How Small Language Models Cut AI Costs by ~94% — When to Use Them Over GPT-4

Compared with GPT-4, small language models can dramatically reduce recurring inference costs for many practical tasks. In some carefully optimized deployments, for example quantized, distilled

Building Self‑Organizing AI Memory with Zettelkasten Graphs

A practical architecture makes it possible to give an AI a long‑term memory that organizes itself. This article shows how a Zettelkasten knowledge graph can

The 14 AI Terms That Defined 2025 — What They Mean

These 2025 AI terms are the vocabulary you will meet in news, product pages and policy debates. They bundle technical ideas such as foundation models,